Your fintech is processing card transactions. You’re using OpenAI’s API for transaction classification, fraud detection, and customer support. It costs at least €1,000 a month. Your compliance team asks a question: if OpenAI becomes unavailable tomorrow, what happens to your critical functions?

You don’t have a good answer. That’s a DORA problem now.

DORA requires financial services firms to document exit strategies for any external ICT vendor supporting critical or important functions. An exit strategy isn’t a document saying “we could probably move to Azure.” It’s a tested procedure showing you’ve actually migrated representative data, measured the time it took, and validated that operations continued. If OpenAI is your only AI vendor and you’ve never tested a migration away from it, expect your regulator to raise that as a finding.

The same logic applies to any SaaS AI platform: Anthropic, Mistral Cloud, or any other proprietary model API. If one vendor is the only source of a capability, you have single-vendor concentration, and that concentration is a risk DORA now requires you to manage.

But there’s an architectural alternative that’s become viable in early 2026: self-hosted AI on EU infrastructure, paired with open-source models and proper network isolation. Before getting into the architecture, it’s worth understanding why this isn’t just a cost play.

Why Self-Hosted is a Regulatory Story, Not Just a Cost Story

The headline argument for self-hosting is usually cost: “SaaS API bills vs bare-metal inference.” That’s real, but it’s the wrong lead.

Cost savings matter, but for regulated financial services firms, regulatory compliance matters more. Here’s what the compliance picture looks like.

DORA applies from 17 January 2025. It requires financial entities to assess concentration risk, test exit strategies, and maintain a documented register of their ICT third-party arrangements, including which vendors support critical functions. The regulation doesn’t say “use EU providers” or “self-host.” It says “manage your dependencies, understand your risks, and prove you can survive if a vendor fails.”

SaaS AI creates a specific problem: you depend on a US-based vendor (OpenAI, Anthropic, Google) for a critical function, and your exit path is “switch to a different US vendor, which takes weeks or months.” Your regulator knows this. When they examine you and ask “what happens if your vendor is unavailable,” the answer “we switch to Azure” isn’t an exit strategy. That’s vendor roulette.

Self-hosted AI on EU infrastructure with open-source models flips the dynamic. Your exit strategy is operational: “we’ve tested this, we know it takes X hours, we have the data and the code, we can run it.” That’s something a regulator can audit.

Second driver: GDPR Article 32 requires “appropriate” technical and organisational security measures, including encryption, pseudonymization, safeguards for ongoing confidentiality and integrity (access control among them), and regular testing. “Appropriate” is risk-proportionate, not prescriptive, and for transaction data or payment processing, financial regulators set a high bar. Self-hosted infrastructure with customer-managed encryption is arguably more compliant than SaaS APIs where encryption keys are vendor-managed and audit visibility is limited.

Third driver: the EU AI Act (Regulation (EU) 2024/1689, the EU’s risk-based framework for regulating AI systems). Its obligations phase in: prohibited practices from 2 February 2025, general-purpose AI model obligations from 2 August 2025, the main high-risk obligations from 2 August 2026, and a longer transition, until 2 August 2027, for high-risk systems embedded in regulated products. For financial services, the part that matters is the high-risk regime, which covers AI used to evaluate the creditworthiness of natural persons or establish their credit score, and AI used for risk assessment and pricing in life and health insurance.

From 2 August 2026, the Article 13 transparency duties (information to deployers) and the Article 50 transparency obligations (disclosure about AI interactions and AI-generated content) apply, on top of the Article 17 quality management system obligation that wraps around them. In plain terms: you need to be able to explain, at the depth the regulation requires, how the system works, what data informs its behaviour, and how it is governed. Proprietary SaaS models make that harder to evidence, because you can’t open the box. Self-hosted models (Llama, Mistral) are at least inspectable: you control the container.

Cost savings are real, but the regulatory alignment is the actual value driver. You’re not choosing self-hosted because it’s cheaper. You’re choosing it because it’s compliant by design.

The Architecture: VPC Isolation + EU Infrastructure + Open-Source AI

A production-ready self-hosted AI stack for financial services has three components.

Component 1: EU Infrastructure. You need a server running in EU datacenters, with provider certifications that your regulator recognizes. Three proven options:

Hetzner (Germany) is ISO 27001:2022 certified, and its dedicated servers run in EU datacenters (Nuremberg, Falkenstein, Helsinki); Hetzner Cloud also offers US and Singapore locations, so pin your region explicitly. On the dedicated line, its published GPU matrix starts at €184/month for an RTX 4000 SFF Ada (GEX44) and €838/month for an RTX 6000 Ada (GEX130), plus one-time setup, per the Hetzner dedicated GPU matrix (checked 21 April 2026). A100-class capacity is offered through Hetzner’s GPU cloud at a separate price point, reported at roughly €2.49/hour (~€1,820/month for continuous use); verify the current tier against Hetzner’s cloud pricing page before committing. Hetzner has no SecNumCloud certification (that’s France-specific), but its German incorporation and ISO 27001 audit satisfy SEAL-2 requirements.

OVHcloud (France) holds the SecNumCloud 3.2 qualification, the gold standard from ANSSI (Agence nationale de la sécurité des systèmes d’information, France’s national cybersecurity agency), which certifies EU-only data residency and EU-staffed operations. Read the fine print: the SecNumCloud qualification granted on 31 March 2025 covers OVHcloud’s Bare Metal Pod product line, not the Public Cloud GPU catalogue. On Public Cloud, the A100-180 instance is published at €2.75/hour (~€2,010/month at 730 hours), with higher multi-GPU tiers scaling linearly from there. Bare Metal Pod with SecNumCloud is quoted on request. If your compliance team specifically needs the SecNumCloud wrapper, budget for the Bare Metal Pod path; if ISO 27001 plus EU jurisdiction is enough, the Public Cloud GPUs are the cheaper entry point. SecNumCloud, applied correctly, is the architecture we’d map to SEAL-3.

Scaleway (France, subsidiary of Iliad Group) is ISO 27001:2022 certified with HDS (healthcare data hosting) certification. SecNumCloud qualification is in progress (expected 2026). GPU pricing is on the public pricing page: A100 at roughly €2.50/hour (€1,825/month at 730 hours), H100 SXM at roughly €3.50/hour (€2,555/month). (Checked 21 April 2026; tiers change, so quote the page at the date you buy.)

For most financial services firms, Hetzner or Scaleway is sufficient. You’re checking two things: (1) is the provider EU-incorporated and operating EU-only datacenters, and (2) does the provider have a recognized compliance certification (ISO 27001 minimum)? Both providers pass. Budget for at least two GPU servers (primary + failover) if you’re handling production workloads.

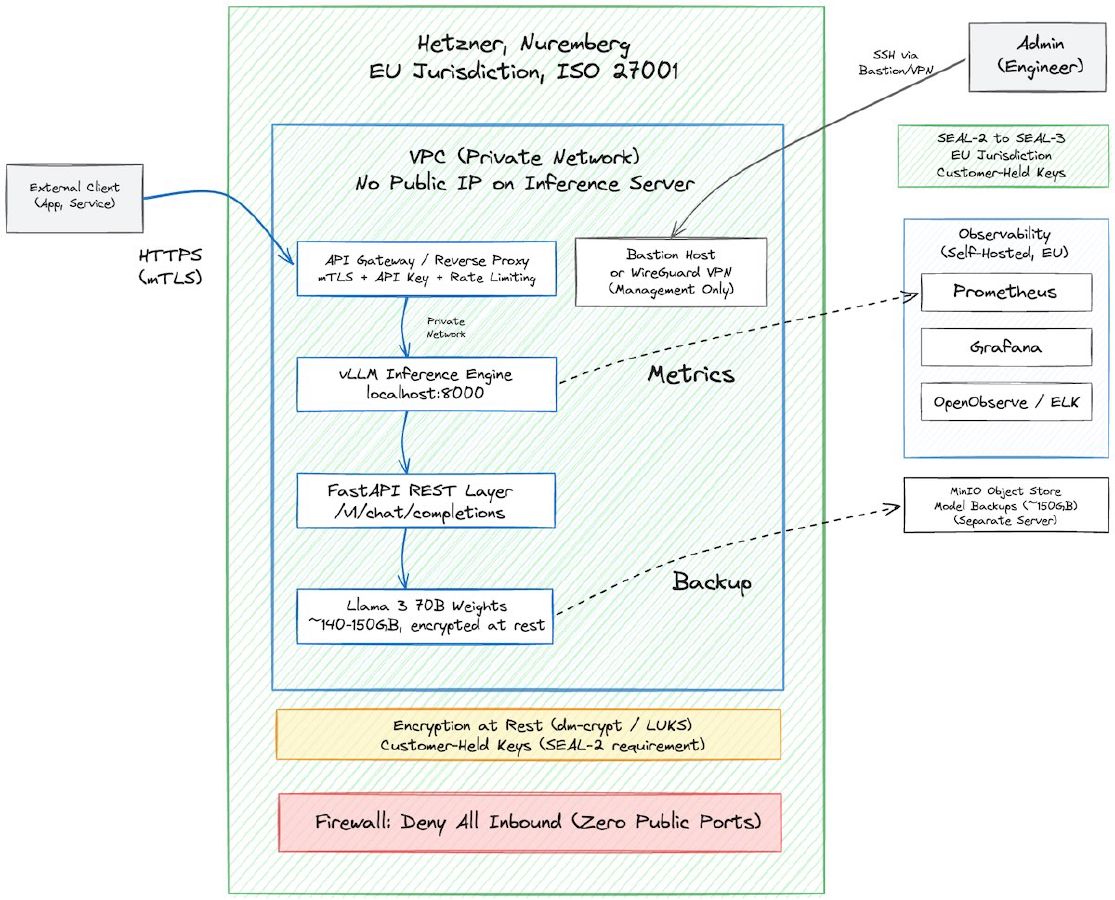

Component 2: Network Isolation. Your inference server doesn’t belong on the public internet. The standard pattern here is simpler than you might expect: keep everything inside the provider’s VPC (Virtual Private Cloud), and expose only what needs to be exposed through a controlled adapter.

Your GPU server runs inference on a private network. No public IP, no open ports, no SSH exposed. The inference API listens on a private address inside the VPC. For applications that need to consume it (your fraud detection service, your transaction classifier), they connect over the private network if they’re colocated, or through a lightweight API gateway or reverse proxy that sits at the VPC boundary with mTLS (mutual TLS), API key authentication, and rate limiting. The gateway is the only component with a public-facing address, and it never touches the model directly. It forwards authenticated requests to the inference server and returns responses. Think of it as a bouncer, not a tunnel.

This is the same pattern you’d use for any sensitive internal service. The inference server is no different from a database or a secrets manager: it doesn’t need to be reachable from the internet, and it shouldn’t be.

For customer-facing workloads (say, a support chatbot powered by your self-hosted model), the pattern holds. The chatbot’s frontend calls your API gateway. The gateway authenticates the request, forwards it to the inference server inside the VPC, and returns the response. The model, the weights, and the transaction data never leave the private network. The adapter is the only surface area.

What about management access? Your team needs to SSH into the server for maintenance, deploy model updates, check logs. Here, you have two options. If your provider offers a VPN or bastion host service (most do), use it. If you need to connect multiple sites or manage infrastructure across providers (say, primary on Hetzner, failover on OVH), a WireGuard-based mesh like NetBird (NetBird GmbH, Berlin, Germany) is worth considering. NetBird is open-source, EU-incorporated, and creates peer-to-peer WireGuard tunnels for admin access without exposing management ports. But for most single-provider setups, the provider’s native VPC isolation is enough. Don’t add a dependency you don’t need.

One sovereignty note on management tools: if you do need a tunnel or zero-trust overlay, pick an EU-incorporated provider. Routing management traffic through a US-jurisdiction service (Cloudflare Tunnels, for example) means a US court can compel access to that traffic under the CLOUD Act. That undermines the sovereignty posture you’ve built. For management access, the same jurisdictional discipline applies as for data.

Component 3: Open-Source AI Models. The models you run are Llama 3 (Meta, published under the Meta Llama 3 Community License), Mistral’s open-weight variants (Mistral 7B, Mixtral 8x7B, Devstral Small are Apache 2.0; the Medium / Large / Codestral lines have their own, more restrictive licences, so check before you pick one), or DeepSeek R1 (MIT). All are fully open-weight, no per-token licensing costs, and runnable on customer infrastructure.

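Llama 3 70B performs at or above GPT-3.5 level on common benchmarks. At BF16 its weights fit across two 80 GB A100s, with little room left for the KV cache; a single 80 GB H100 needs 8-bit or lower quantization. Mistral 7B is optimized for inference speed and fits on a single consumer-grade GPU. DeepSeek R1 is newer and very capable, but the full model is a 671B-parameter mixture-of-experts that needs a multi-GPU node; its distilled variants are the ones that fit the hardware discussed here. All three publish their weights and their licence text, and all are inspectable at inference time. Training-data provenance is a different matter: Meta has not published the full Llama 3 training corpus, and Mistral and DeepSeek disclose very little. If your AI-Act evidence pack needs “what data informed this model”, you’ll still need to do that work yourself; choosing an open model doesn’t do it for you.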

You download the model weights (about 140 GB for Llama 3 70B at the BF16 precision Meta releases; roughly 70 GB if you quantize to 8-bit, or around 40 GB at 4-bit, for lower-VRAM deployments), store them on your server, and run inference locally. The cost is the compute cost of the GPU and the CPU cycles. There’s no vendor billing, no usage API, no per-token metering.
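The sizes above follow from simple arithmetic: parameter count times bytes per parameter. A rough Python sketch, assuming weights dominate and reserving a hypothetical 10% of VRAM for the KV cache and runtime overhead (real engines need more headroom for long contexts, and 4-bit formats carry extra scale metadata, which is why the practical 4-bit figure is nearer 40 GB than the raw 35 GB):

```python
# Bytes per parameter at each precision (weights only; no KV cache, no activations).
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_size_gb(params_billion: float, precision: str) -> float:
    """Raw weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits_in_vram(params_billion: float, precision: str, total_vram_gb: float,
                 reserve_fraction: float = 0.1) -> bool:
    """True if the weights fit after reserving `reserve_fraction` of VRAM for everything else."""
    usable = total_vram_gb * (1.0 - reserve_fraction)
    return weight_size_gb(params_billion, precision) <= usable
```

For Llama 3 70B this gives 140 GB at BF16, 70 GB at 8-bit and 35 GB at 4-bit; `fits_in_vram(70, "bf16", 160)` is true for two 80 GB cards, and `fits_in_vram(70, "int8", 80)` is true for a single 80 GB card.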

For financial services applications (fraud classification, transaction tagging, anomaly detection), these models are sufficient. They’re not as capable as frontier models such as GPT-5, but they’re good enough for most regulated workloads. And unlike proprietary SaaS models, you own the inference process. You control the data flow. You can audit the model’s behavior.

A Concrete Pattern: The Hybrid Approach

In practice, most financial services firms that self-host don’t go 100% self-hosted. They run a hybrid.

Here’s the pattern:

Use self-hosted Llama or Mistral for sensitive workloads (transaction classification on card data, fraud signals on account activity, any AI touching regulated data streams). These run on your infrastructure, end-to-end inside your VPC, and the data never leaves your EU datacenter.

Use SaaS APIs (OpenAI, Claude, Mistral Cloud) for non-sensitive, high-volume workloads (customer support questions, documentation generation, marketing copy, anything non-regulated). These scale elastically through the SaaS API, and for bursty traffic they can cost less per inference than GPUs you would have to provision for peak load, because the API provider has economies of scale you won’t.
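One way to make the split enforceable rather than a convention is an explicit routing table. A minimal sketch; the workload names, endpoint URLs and the two-level data classification are hypothetical placeholders, and unknown workloads deliberately default to the self-hosted path:

```python
from dataclasses import dataclass
from enum import Enum


class DataClass(Enum):
    REGULATED = "regulated"          # card data, account activity, fraud signals
    NON_REGULATED = "non_regulated"  # docs, marketing copy, generic support questions


@dataclass(frozen=True)
class Backend:
    name: str
    base_url: str


# Hypothetical endpoints: a private VPC address and a placeholder SaaS URL.
SELF_HOSTED = Backend("self-hosted-llama", "http://10.0.0.5:8000/v1")
SAAS = Backend("saas-api", "https://saas.example.com/v1")

# Explicit, reviewable mapping of workloads to data classes (illustrative names).
WORKLOAD_CLASS = {
    "transaction_classification": DataClass.REGULATED,
    "fraud_signals": DataClass.REGULATED,
    "support_faq": DataClass.NON_REGULATED,
    "marketing_copy": DataClass.NON_REGULATED,
}


def route(workload: str) -> Backend:
    # Unknown workloads fall back to the self-hosted path: fail closed, not open.
    data_class = WORKLOAD_CLASS.get(workload, DataClass.REGULATED)
    return SELF_HOSTED if data_class is DataClass.REGULATED else SAAS
```

The table doubles as evidence: it is exactly the list of self-hosted versus SaaS-dependent functions that a DORA examiner will ask for.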

The DORA angle here is important: you’ve documented which functions are self-hosted and which are SaaS-dependent. The sensitive ones have an exit strategy (you own the code and the data). The non-sensitive ones don’t need one, or the exit strategy is “we’ll rebuild this on Llama if needed, low priority.” That’s a defensible position in a DORA examination.

Cost impact: if your sensitive workload fits comfortably on mid-tier RTX Ada hardware, a pair of Hetzner GEX130 boxes (2 × €838/month = ~€1,676/month, plus setup and bandwidth) is a defensible primary + failover footprint. If you need A100-class capacity, you’re closer to €1,820/month per server on Hetzner’s GPU cloud, roughly €3,640/month for a pair. Routing high-volume non-sensitive work to SaaS APIs can shift these figures considerably, depending on volume. The exact savings depend on your workload mix, your SaaS contract terms, and how much of your traffic actually needs the A100 tier, so run the math with your own numbers. The direction is consistent: at steady-state volume with well-utilized GPUs, self-hosted inference on EU hardware can be meaningfully cheaper per token than enterprise SaaS agreements, and the compliance posture is stronger.
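A small calculator for running that math with your own numbers. The 730-hour month matches the convention used for the monthly figures in this post; the SaaS price per million tokens is a placeholder you must replace with your contract rate, and the break-even ignores capacity limits (a server can only serve so many tokens a month).

```python
HOURS_PER_MONTH = 730  # convention used for the monthly figures in this post


def monthly_from_hourly(eur_per_hour: float, hours: float = HOURS_PER_MONTH) -> float:
    """Cost of running one GPU instance continuously for a month."""
    return eur_per_hour * hours


def pair_cost(monthly_per_server: float) -> float:
    """Primary + failover, excluding one-time setup fees and bandwidth."""
    return 2 * monthly_per_server


def breakeven_million_tokens(monthly_infra_eur: float,
                             saas_eur_per_million_tokens: float) -> float:
    """Monthly volume (millions of tokens) above which fixed infra beats per-token SaaS pricing."""
    return monthly_infra_eur / saas_eur_per_million_tokens
```

With the GEX130 pair at €1,676/month and a hypothetical SaaS rate of €2 per million tokens, the break-even is 838 million tokens a month; below that volume, per-token pricing wins on cost alone.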

Implementation Sketch

This is real, testable architecture. Here’s what it looks like to build it.

Week 1-2: Provision and Harden

Rent a bare-metal server from Hetzner or Scaleway. Get a server with at least 8 CPU cores, 64GB RAM, and NVMe SSD storage. Install Linux (Debian or Ubuntu LTS). Configure the server inside the provider’s VPC with no public IP. Set up management access through the provider’s VPN or bastion host, or through a WireGuard tunnel if you need multi-site admin access. Your inference server is now unreachable from the public internet, with management access restricted to authenticated, encrypted channels.
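A quick way to test the “unreachable from the public internet” claim is to check every address your services actually listen on. This Python sketch only classifies addresses; collecting them (for example from `ss -ltn` output) is left out, and the sample addresses are hypothetical. `0.0.0.0` and `::` mean “every interface”, so they are flagged explicitly rather than left to the private/public test.

```python
import ipaddress


def exposure_problems(bind_addresses: list[str]) -> list[str]:
    """Describe every listen address that is not private-only."""
    problems = []
    for raw in bind_addresses:
        addr = ipaddress.ip_address(raw)
        if addr.is_unspecified:
            # 0.0.0.0 or :: means "all interfaces", including any public one.
            problems.append(f"{raw}: binds all interfaces")
        elif not (addr.is_private or addr.is_loopback):
            problems.append(f"{raw}: not a private address")
    return problems
```

An empty list is the result you want; anything else is a finding to fix before the server takes real traffic.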

Set up encryption at rest with dm-crypt/LUKS. This is key for SEAL-2 compliance: encryption keys are under your control, not the provider’s. You hold the passphrase.

Week 2-3: Deploy Inference Stack

Download the Llama 3 70B or Mistral model weights. Running continuously, an A100-class GPU costs roughly €1,820/month on Hetzner’s GPU cloud (at ~€2.49/hour) and roughly €2,010/month on OVHcloud’s Public Cloud A100-180 (at €2.75/hour). If you need the SecNumCloud wrapper, the equivalent lives on OVHcloud’s Bare Metal Pod line, priced on request and materially more expensive. For production workloads, budget for at least two servers (inference + failover).

Use vLLM or llama.cpp as your inference engine. Both are battle-tested, open-source, and production-ready. Bind the server to localhost or the private VPC address, never to a public interface.

Expose a single OpenAI-compatible endpoint, /v1/chat/completions, so you can swap between SaaS and self-hosted by changing the base URL rather than the application code. vLLM’s built-in server and llama.cpp’s llama-server already provide this endpoint; wrap them in your own REST layer (FastAPI, Flask, or similar) only if you need custom pre- or post-processing.
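Because the endpoint is OpenAI-compatible, the client side reduces to the same request against a different base URL. A standard-library sketch that builds (but does not send) such a request; the private base URL, the Hugging Face model ID and the system prompt are illustrative assumptions, and vLLM’s default port of 8000 is assumed:

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, user_text: str,
                       api_key: str) -> urllib.request.Request:
    """Build an OpenAI-compatible chat completions request without sending it."""
    body = {
        "model": model,
        "messages": [
            # Illustrative prompt for the transaction-classification use case.
            {"role": "system", "content": "Classify the transaction into one category."},
            {"role": "user", "content": user_text},
        ],
        "temperature": 0,  # greedy decoding, for more repeatable classifications
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


# Same call shape for both backends; only base URL, key and model name differ.
SELF_HOSTED_BASE = "http://10.0.0.5:8000/v1"  # hypothetical private VPC address
req = build_chat_request(SELF_HOSTED_BASE, "meta-llama/Meta-Llama-3-70B-Instruct",
                         "CARD PAYMENT 12.40 EUR BAKERY", api_key="replace-me")
```

Sending it is `urllib.request.urlopen(req, timeout=30)`; pointing the base URL, key and model name at your SaaS provider is the whole switch.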

Week 3-4: Integrate Authentication and Observability

Generate a random API key, hash it, and store only the hash in the gateway’s or inference server’s config. Reject any request that doesn’t present a key matching the stored hash.
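A minimal sketch of that flow with Python’s standard library. Because the key is a long random token rather than a human password, a single SHA-256 is a reasonable hash here; passwords would need a deliberately slow hash instead. Where the digest is stored is up to your config management.

```python
import hashlib
import hmac
import secrets


def new_api_key() -> tuple[str, str]:
    """Return (plaintext key to hand to the client once, SHA-256 hex digest to store)."""
    key = secrets.token_urlsafe(32)  # 32 random bytes, URL-safe encoded
    return key, hashlib.sha256(key.encode()).hexdigest()


def verify_api_key(presented: str, stored_digest: str) -> bool:
    digest = hashlib.sha256(presented.encode()).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(digest, stored_digest)
```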

Set up Prometheus and Grafana (both open-source, self-hosted) to monitor inference latency, token throughput, error rates, and cost per request. This is crucial for understanding whether self-hosting makes sense for your workload.

Set up structured logging (JSON format) and store logs in an open-source observability platform (OpenObserve or the ELK stack; add Jaeger if you also want distributed tracing). You need audit logs anyway for GDPR; use them to understand model behavior.

Week 4+: Test and Iterate

Run a small subset of your transaction classification through the self-hosted model. Compare output quality and latency against OpenAI. Measure cost per classification (GPU hours × hourly rate, divided by transactions processed).

If the self-hosted model is 95%+ as accurate as the SaaS model, and the cost is lower, migrate more workloads. If it’s not hitting your latency SLA, consider a smaller, faster model (for example, Mistral 7B instead of Llama 3 70B) or rent a faster GPU.
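The decision rule above can be written down so the pilot produces a reproducible answer. A sketch, assuming you have a labelled sample of transactions (ground truth), each model’s predicted labels, GPU hours for the run, and a latency SLO; the thresholds mirror the text, and the function and field names are hypothetical:

```python
from dataclasses import dataclass


def accuracy(predicted: list[str], truth: list[str]) -> float:
    if len(predicted) != len(truth) or not truth:
        raise ValueError("need equal-length, non-empty label lists")
    return sum(p == t for p, t in zip(predicted, truth)) / len(truth)


def cost_per_item(gpu_hours: float, eur_per_gpu_hour: float, items: int) -> float:
    """GPU hours times hourly rate, divided by items processed."""
    return gpu_hours * eur_per_gpu_hour / items


@dataclass
class PilotResult:
    relative_accuracy: float  # accuracy(self-hosted) / accuracy(SaaS), same truth labels
    cost_per_item_eur: float
    p95_latency_ms: float


def next_step(r: PilotResult, saas_cost_per_item_eur: float, latency_slo_ms: float) -> str:
    if r.p95_latency_ms > latency_slo_ms:
        return "try a smaller, faster model or a faster GPU"
    if r.relative_accuracy >= 0.95 and r.cost_per_item_eur < saas_cost_per_item_eur:
        return "migrate more workloads"
    return "keep this workload on SaaS for now"
```

Scoring both models against the same human-verified labels matters: measuring the self-hosted model only by agreement with the SaaS model would treat the SaaS model’s mistakes as ground truth.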

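Keep the SaaS API as a fallback for workloads whose data is allowed to leave your environment. If self-hosted inference is degraded, automatically fail over to the SaaS API. For regulated data streams, fail over to your second self-hosted server instead; sending them to SaaS would break the hybrid split above. This gives you operational safety without the risk of being completely dependent on SaaS.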
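A minimal failover sketch. The backends are plain callables standing in for your HTTP clients (hypothetical here), and, as an assumption consistent with keeping regulated data inside your VPC, regulated workloads only fail over to a second self-hosted server; the SaaS fallback is appended only for data that is allowed to leave your environment.

```python
from typing import Callable, Sequence

# A backend takes a prompt and returns a completion, raising on failure or timeout.
Backend = Callable[[str], str]


def backends_for(regulated: bool, primary: Backend, failover: Backend,
                 saas: Backend) -> list[tuple[str, Backend]]:
    chain = [("self-hosted-primary", primary), ("self-hosted-failover", failover)]
    if not regulated:
        chain.append(("saas", saas))  # only non-regulated data may leave the VPC
    return chain


def complete_with_failover(prompt: str,
                           chain: Sequence[tuple[str, Backend]]) -> tuple[str, str]:
    """Try each backend in order; return (backend name, completion) from the first success."""
    errors = []
    for name, call in chain:
        try:
            return name, call(prompt)
        except Exception as exc:  # narrow this to your client's timeout/HTTP errors
            errors.append(f"{name}: {exc!r}")
    raise RuntimeError("all backends failed: " + "; ".join(errors))
```

Returning the backend name alongside the completion makes every failover visible in your logs, which is what your incident process needs to see when a critical function switches path.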

This is not a six-month migration. It’s a four-week implementation. The hard part isn’t the technology. It’s the operational discipline: monitoring, alerting, backups, key rotation, and staying current on security patches.

SEAL-Level Mapping: Where Does This Architecture Sit?

Self-hosted on Hetzner or OVH with proper VPC isolation qualifies as SEAL-2 minimum, potentially SEAL-3.

One note on scope. SEAL-2 is a range, not a point. The top of the range is what the architecture above describes: EU-incorporated provider, EU-only datacenter, no public exposure of the inference server, customer-held encryption keys, and an entire data path that avoids US-jurisdiction hops. The bottom of the range is what you get from a US hyperscaler with EU data-residency contract clauses and customer-managed keys, where the legal jurisdiction underneath the service is still US. Both sit inside SEAL-2 as the framework defines it; they do not give you the same Schrems II posture. Knowing which end of the range you’re on matters.

For the self-hosted case in this post, the architecture clears the top of SEAL-2 and can be pushed into SEAL-3 with the right key-management setup. The critical dependency is the data path: if your inference traffic routes through a US-jurisdiction service (a US-based API gateway, a US CDN that terminates TLS, or a US tunnel provider like Cloudflare), the CLOUD Act exposure comes back in through the edge regardless of where the GPU sits. The architecture described here avoids this by keeping all of it (inference, management, and monitoring) within the EU provider’s network.
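The data-path check can be made mechanical: list every hop a request crosses and who operates it. A sketch under explicit assumptions: “EU” here means the 27 member states by ISO code (your legal team may prefer the EEA), and the high/medium severity split (high when the hop terminates TLS and can see plaintext) is a heuristic of this example, not part of the SEAL framework.

```python
from dataclasses import dataclass

# EU member states (ISO 3166-1 alpha-2). Assumption: adjust if you treat the EEA as in scope.
EU_MEMBER_STATES = {
    "AT", "BE", "BG", "HR", "CY", "CZ", "DK", "EE", "FI", "FR", "DE", "GR", "HU", "IE",
    "IT", "LV", "LT", "LU", "MT", "NL", "PL", "PT", "RO", "SK", "SI", "ES", "SE",
}


@dataclass(frozen=True)
class Hop:
    name: str                   # e.g. "API gateway", "CDN", "admin tunnel"
    operator_jurisdiction: str  # incorporation of the operating company, ISO code
    terminates_tls: bool        # True if the hop can see plaintext requests


def jurisdiction_findings(path: list[Hop]) -> list[str]:
    """Flag every hop operated from outside the EU, high severity sorted first."""
    findings = []
    for hop in path:
        if hop.operator_jurisdiction in EU_MEMBER_STATES:
            continue
        severity = "high" if hop.terminates_tls else "medium"
        findings.append(f"{severity}: {hop.name} ({hop.operator_jurisdiction})")
    return sorted(findings)
```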

For SEAL-3 (full operational and legal sovereignty), you’d need:

- EU-incorporated provider operating EU-only infrastructure (Hetzner, OVH, Scaleway all qualify)

- Customer-managed encryption keys in an on-premises or EU-hosted HSM (hardware security module)

- Explicit contractual guarantees of EU-only data residency and EU-only staff for critical operations

The self-hosted Hetzner setup reaches SEAL-2. If you layer on an EU HSM for key management, you hit SEAL-3. HSM-as-a-service pricing varies by provider and configuration, but even with that addition the total lands well under full SaaS pricing at enterprise volume.

Compare this to a SaaS approach. OpenAI running in a US region equals SEAL-0 (no sovereignty). OpenAI API with a Data Processing Addendum (DPA) equals SEAL-1 (EU data processing, but US legal jurisdiction still applies). OpenAI API with Enterprise Key Management (EKM, OpenAI’s BYOK for Enterprise/Edu workspaces) plus strict EU contract terms lands at the bottom of SEAL-2: the keys are customer-held, but the infrastructure underneath is a US hyperscaler. That means primarily Microsoft Azure for the stateless APIs under the restructured 2025 OpenAI–Microsoft agreement, with additional training and inference capacity on AWS under OpenAI’s November 2025 deal. CLOUD Act exposure remains. The self-hosted approach sits at the top of SEAL-2 (or SEAL-3 with a proper HSM), and it costs less.

The Operational Reality: Monitoring and Reliability

One catch: self-hosted means you own operational reliability. No SaaS vendor to blame if the model inference is slow or the GPU runs out of memory.

Your observability stack is critical. You need to know: is inference latency degrading? Are token costs trending up (GPU utilization dropping)? Are there inference errors that would indicate data quality issues? Is the model producing bad outputs that need retraining?

Open-source observability (Prometheus, Grafana, OpenObserve) is the way forward here. SaaS observability (Datadog, New Relic) adds cost and creates vendor lock-in again. The metrics and logs from your infrastructure should stay on your infrastructure.

Key metrics to monitor:

- Inference latency (p50, p95, p99)

- Tokens generated per second

- Cost per inference (GPU hours × hourly rate, divided by inferences)

- Error rates (failed requests, timeouts, OOM errors)

- Cache hit rates (if using in-memory caching)
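For the latency percentiles, a nearest-rank calculation over raw samples is enough for a pilot; in production you would more likely record a Prometheus histogram and let PromQL’s `histogram_quantile()` do the work. A minimal sketch:

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile, for 0 < p <= 100."""
    if not samples or not 0 < p <= 100:
        raise ValueError("need at least one sample and 0 < p <= 100")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def latency_summary(samples_ms: list[float]) -> dict[str, float]:
    return {f"p{p}": percentile(samples_ms, p) for p in (50, 95, 99)}
```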

If any metric is trending badly, you need to know immediately. A slow inference stack is a revenue problem. A broken inference stack is a DORA incident.

Backup strategy: your model weights are large (about 140 GB at BF16 for Llama 3 70B, less if quantized). You need versioned backups. Store them on a separate storage system (not the same GPU server). Ideally, an object store like MinIO (open-source S3-compatible) on a separate machine. Budget for a dedicated storage server with enough headroom for model versions and inference logs.
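Versioned backups are only useful if you can prove a restore is intact. A sketch that records a SHA-256 manifest of a model directory and checks a restored copy against it; the directory layout is hypothetical, and the manifest should be stored outside the model directory (for example in version control next to your config).

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so multi-GB weight shards never sit fully in RAM."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(model_dir: Path) -> dict[str, str]:
    """Map each file under model_dir (relative path) to its SHA-256 digest."""
    return {
        p.relative_to(model_dir).as_posix(): sha256_file(p)
        for p in sorted(model_dir.rglob("*"))
        if p.is_file()
    }


def verify_restore(model_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Return files that are missing from the restore or whose digest changed."""
    current = build_manifest(model_dir)
    return sorted(name for name, expected in manifest.items()
                  if current.get(name) != expected)
```

Saving the manifest as JSON (for example with `json.dump`) next to each backup version gives the restore drill a concrete pass/fail check.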

If your GPU server fails, you can spin up a replacement in hours (model weights are already backed up, config is version-controlled). That’s your exit strategy for GPU failure. It’s testable and real.

Trade-offs and Caveats

Self-hosted AI is not a universal answer. There are real trade-offs.

Operational burden: You’re responsible for patching, monitoring, security updates, and disaster recovery. If your team is small (under five engineers) or has limited infrastructure experience, self-hosting adds risk. You can mitigate this by outsourcing observability or using managed GPU providers, but at that point, cost savings shrink.

Model capability: Current open-source models (Llama, Mistral) are very good, but they’re not state-of-the-art. For cutting-edge AI (fine-tuned reasoning, complex multimodal tasks), SaaS APIs still have an edge. Evaluate your actual use case. For transaction classification, fraud detection, and customer support? Open-source models are fine. For R&D or novel use cases? Maybe you need SaaS APIs.

Scaling: throughput varies enormously by model, batch size, and GPU class. A single modern GPU can reach a few hundred inferences per second on small models at large batch sizes, but only single-digit to low-double-digit tokens per second per request on Llama 3 70B at interactive latency. Benchmark your own configuration before budgeting capacity. If you need more throughput than one server gives you, you scale horizontally (multiple servers) or use a managed inference provider (which starts to look like SaaS). At that point, the cost advantage shrinks.
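Once you have a benchmarked per-server figure, sizing is simple arithmetic. A sketch; the 25% headroom and the single failover spare are assumptions to tune, and the inputs must come from your own benchmark, not from the rough ranges above.

```python
import math


def servers_needed(peak_rps: float, benchmarked_rps_per_server: float,
                   headroom: float = 0.25, failover_spares: int = 1) -> int:
    """Servers to cover peak load at (1 - headroom) utilisation, plus failover spares."""
    usable_rps = benchmarked_rps_per_server * (1.0 - headroom)
    return math.ceil(peak_rps / usable_rps) + failover_spares
```

For example, a 50 requests/second peak against a benchmarked 20 per server gives four active servers plus one spare.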

Regulatory certification: Hetzner and Scaleway do not hold the SecNumCloud qualification (yet). If your regulator specifically requires SecNumCloud or a government-recognized certification, then among the providers covered here you’re limited to OVHcloud, and only its Bare Metal Pod line. Check with your competent authority before assuming Hetzner or Scaleway are sufficient.

The honest take: self-hosted is right for financial services firms with (1) moderate AI workloads (millions of inferences per month, not billions), (2) tight compliance requirements (DORA, GDPR, SEAL-2+ target), and (3) engineering teams capable of running and monitoring production infrastructure. It’s not right for firms that are operationally lean or have no in-house infrastructure expertise.

Next Steps

If this pattern is relevant to your situation, here’s what to do first.

Audit your current AI dependencies. Which SaaS AI vendors are you using? Which of those support critical or important functions (DORA’s term for functions whose disruption would materially impair your financial performance, the soundness or continuity of your services, or your continued compliance with your authorisation)? For each critical dependency, assess: have you tested an exit? Do you have a documented alternative? How long would migration take?

Evaluate your workloads. Not all AI needs self-hosting. Identify which workloads (fraud detection? transaction classification?) could run on open-source models. Which require state-of-the-art models and genuinely need SaaS? This determines your hybrid split.

Assess infrastructure readiness. Do you have an engineer (or team) capable of provisioning, monitoring, and patching a production server? If not, self-hosting is premature. Build that capability first, or use a managed GPU provider to reduce the operational load.

Prototype before committing. Rent a Hetzner GPU server for two weeks. Download Llama 3, run inference on your test data, and measure latency and accuracy. The total cost is under €1,000. You’ll have concrete data for the business case, and you’ll know whether your workload profile justifies the full build-out.

Run a DORA assessment with your compliance team. Show them your proposed architecture. Ask: for self-hosted workloads, does this meet the exit strategy requirement? For hybrid workloads, how do we document the risk? Your compliance team’s answer will tell you whether this is a viable path.

The regulatory landscape is shifting. Self-hosted is no longer an extreme option. It’s becoming the practical choice for regulated financial services that want to own their dependencies.