On 26 March 2026 Italy’s data protection authority, the Garante per la Protezione dei Dati Personali (the Garante), fined Intesa Sanpaolo €31.8 million. Two weeks earlier, on 12 March, the same regulator had already fined the same bank €17,628,000 for a separate profiling issue tied to the carve-out of its digital subsidiary Isybank. Inside a fortnight, one European bank had cost itself €49.4 million in GDPR (General Data Protection Regulation) penalties.

The profiling case is interesting. The breach case is the one every CISO, DPO, and cloud architect in EU financial services should read with a pen in hand. Not because of the number. Because of the two numbers.

9 affected data subjects, per Intesa’s initial breach notification. 3,573 affected data subjects, per the Garante’s own investigation.

That gap is the article.

What actually happened

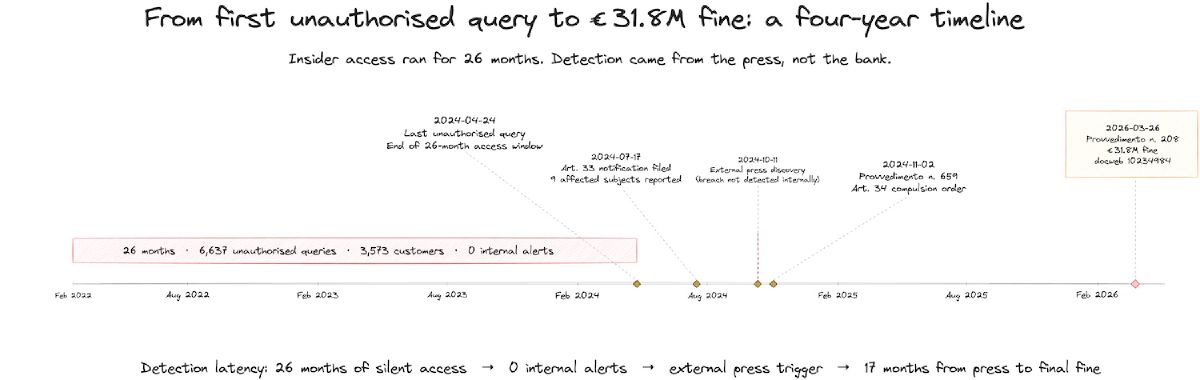

A branch employee at the Bisceglie/Barletta agribusiness office executed 6,637 unauthorised look-ups on the records of 3,573 distinct Intesa Sanpaolo customers between 21 February 2022 and 24 April 2024. Twenty-six months. The records queried included 34 national politicians (Italian press reported the list), 43 entertainment and sports personalities, 73 bank employees, and around 3,422 customers from the employee’s personal and professional sphere, roughly 2,450 of them concentrated in a single province.

None of this triggered an internal alert. The breach surfaced through national press coverage in October 2024. Only then did the Garante open an own-motion investigation, which is where the “9 affected” figure became the “3,573 affected” figure.

Provvedimento n. 208 of 26 March 2026 (doc. web 10234984, if you want to read the original) invokes GDPR Article 5(1)(f) on integrity and confidentiality, Article 5(2) on accountability, Article 24 on controller responsibility, Article 32 on security of processing, and the breach-notification chain under Articles 33 and 34. The criminal corollary, Italian Penal Code Article 615-ter (unauthorised access to a computer system), applies because Italian courts have held that the offence covers an authorised user who exceeds the scope of their authorisation, not just an outside intruder.

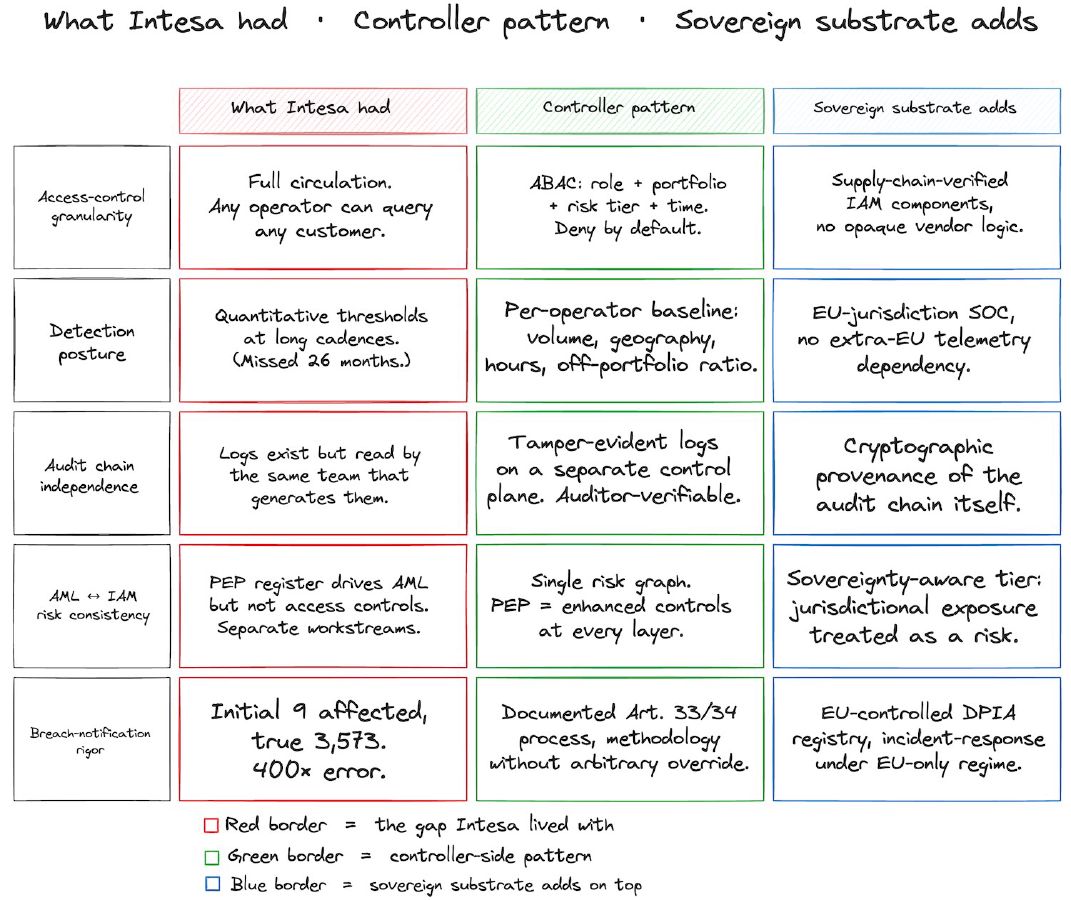

The Provvedimento describes the bank’s access model as “piena circolarità”: any operator could query any customer in the entire Intesa Sanpaolo customer base, regardless of whether that customer was assigned to the operator’s branch or portfolio. The Garante was careful not to censure this organisational choice per se. What the Garante censured was the absence of controls proportionate to the breadth of access granted.

How detection failed for 26 months

The bank had monitoring. The Garante’s account, filtered through the legal analyses from cybersecurity360.it and globalcom.it, is that the monitoring relied on quantitative thresholds at long temporal cadences. Translation: alerts fired when an operator made too many look-ups in a given window. The insider’s pattern sat below those thresholds for 26 months.
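A quick back-of-the-envelope calculation shows why a volume threshold would stay quiet. The look-up count and dates are the ones reported above; the 30-per-day threshold is a hypothetical figure chosen for illustration, not a value taken from the Provvedimento.

```python
from datetime import date

# Figures reported in the article.
LOOKUPS = 6_637
FIRST_DAY = date(2022, 2, 21)
LAST_DAY = date(2024, 4, 24)

# Hypothetical volume threshold, for illustration only.
HYPOTHETICAL_DAILY_THRESHOLD = 30

# Count calendar days, inclusive of both end dates.
days = (LAST_DAY - FIRST_DAY).days + 1
avg_per_day = LOOKUPS / days

print(f"{days} calendar days, {avg_per_day:.1f} look-ups per day on average")
print("threshold tripped on an average day:", avg_per_day > HYPOTHETICAL_DAILY_THRESHOLD)
```

Averaged over calendar days, the pattern works out to roughly eight look-ups a day. Counting only working days raises the figure but leaves it within what a busy operator does legitimately.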

That is the failure mode. Not “no logging.” Not “no security.” The bank had logs, had thresholds, had a formal ENISA-methodology risk assessment on the books. What it didn’t have was anomaly detection that asked a different question. Not: how many records did this operator touch? Instead: which records, compared to their portfolio? Where are those customers, compared to the operator’s usual geographic footprint? When is the operator accessing them, compared to their normal working pattern?

User and Entity Behaviour Analytics (UEBA, a standard label in the detection market) is the commodity answer to that problem. A baseline per operator: typical volume, typical geographic spread of queried customers, typical hours, typical off-portfolio ratio. Anomalies are deviations from the baseline, not absolute counts. Intesa’s threshold model failed precisely because the insider was disciplined enough to stay within absolute limits.
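The difference between the two questions can be sketched in a few lines. This is a minimal illustration, not how any particular UEBA product works: it scores one feature (the daily off-portfolio ratio) as a z-score against the operator’s own history. The history values, the volume threshold, and the alert cut-off are all hypothetical.

```python
from statistics import mean, stdev


def deviation_score(history: list[float], today: float) -> float:
    """Z-score of today's value against the operator's own history."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A flat history: any change at all is a maximal deviation.
        return 0.0 if today == mu else float("inf")
    return (today - mu) / sigma


# Hypothetical history: share of each day's queries that fell outside the portfolio.
history = [0.02, 0.00, 0.05, 0.03, 0.01, 0.04, 0.02, 0.03]

# Hypothetical day under review: low volume, mostly off-portfolio.
today_queries = 12
today_off_portfolio = 9

VOLUME_THRESHOLD = 30  # hypothetical absolute-count rule
Z_ALERT = 3.0          # hypothetical deviation cut-off

ratio = today_off_portfolio / today_queries
volume_alert = today_queries > VOLUME_THRESHOLD
behaviour_alert = deviation_score(history, ratio) > Z_ALERT

print("volume rule fires:", volume_alert)
print("baseline rule fires:", behaviour_alert)
```

Twelve queries never trip a count-based rule, while nine of them landing off-portfolio is far outside this operator’s norm. A real deployment would baseline several features together and deal with operators whose history is itself contaminated.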

The second thing that didn’t happen: the bank applies enhanced customer due diligence (CDD) to Politically Exposed Persons (PEPs) under Italian anti-money-laundering law (Decreto Legislativo 231/2007). That PEP register exists. It drives AML monitoring. It did not drive access controls on the same individuals’ data. An operator querying a politician’s account got the same friction as an operator querying their neighbour’s account, which is to say none.

The 9-vs-3,573 gap and the ENISA override

Two further findings in the Provvedimento deserve their own paragraphs.

First, the notification gap. Intesa’s initial Article 33 notification to the Garante, filed on 17 July 2024, declared nine affected subjects. The Garante’s investigation, which again started because of press coverage, found 3,573. That is an under-count by a factor of roughly 400 (3,573 / 9 ≈ 397). The communication to data subjects under Article 34 only happened after the Garante issued a separate order (Provvedimento n. 659 of 2 November 2024) compelling it.

Second, the methodology override. Intesa’s risk assessment cited the ENISA methodology for personal data breach severity. In applying it, the bank manually downgraded the severity based on the cause of the incident (a single dishonest employee) rather than the impact on data subjects. The European Data Protection Board Guidelines 9/2022 on breach notification require the assessment to focus on impact. The Garante treated the override as window-dressing inconsistent with the methodology the bank claimed to follow.
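For readers who have not worked with it, the ENISA recommendation (2013) expresses severity as SE = DPC × EI + CB: data processing context, times ease of identification, plus circumstances of the breach. The sketch below encodes that formula and the four severity bands as I understand them from the ENISA document. The input scores are illustrative assumptions, not the values Intesa or the Garante used.

```python
def enisa_severity(dpc: float, ei: float, cb: float) -> tuple[float, str]:
    """Severity per the ENISA 2013 formula: SE = DPC * EI + CB.

    dpc: data processing context score (1 to 4)
    ei:  ease of identification (0.25 to 1.0)
    cb:  circumstances of the breach (additive, never negative)
    """
    se = dpc * ei + cb
    if se < 2:
        level = "low"
    elif se < 3:
        level = "medium"
    elif se < 4:
        level = "high"
    else:
        level = "very high"
    return se, level


# Illustrative inputs only: financial data (3), subjects fully identifiable (1.0),
# confidentiality lost plus malicious intent (0.25 + 0.5).
score, level = enisa_severity(dpc=3, ei=1.0, cb=0.25 + 0.5)
print(score, level)
```

Every term in the formula describes the data or the breach circumstances, and the circumstances term only ever adds to the score. Under this reading there is no input through which "it was just one dishonest employee" could lower the result, which is why a manual downgrade on that basis sits outside the methodology.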

In isolation, either finding would be awkward. Together they paint a specific picture. Accountability as a document, not as a practice. The methodology is in the binder. The controls that would have made the methodology substantive weren’t on the estate.

Why this reaches your cloud architecture

Intesa Sanpaolo is a large Italian retail bank. You may be a German insurer on a managed private cloud, a Dutch neobank on AWS, or an Irish payments platform on a mixed stack. The reason the lesson crosses jurisdictions and architectures is that the failure mode is not Italian and is not on-premises. It is architectural.

Any environment in which:

- a large operator population has read access to sensitive customer data,

- access decisions are made at login or session grant (not at query-time),

- monitoring is framed as quantitative thresholds on logs,

- and AML/CDD risk classifications exist in parallel to, but not inside, the access-control policy,

will produce the same failure mode, given a patient insider. The stack does not matter. The pattern does.

Sovereign cloud providers (SecNumCloud, C5, EUCS-aligned offerings) give you the infrastructure basis for controlling access and producing auditable telemetry. They don’t do the access-control design for you.

Five architectural controls that would have raised the floor

No heroics. These are patterns, not products. Reasonable teams can implement them on a SecNumCloud tenancy, on a hardened private cloud, or on a hyperscaler with the right architectural discipline.

1. Attribute-Based Access Control (ABAC) with context. Role-based access is necessary and insufficient. An operator’s role grants the shape of access. The instance of access, this customer, this field, right now, should be resolved at query time against a policy that considers the operator’s assigned portfolio, the customer’s risk tier (PEP, bank employee, sensitive flag), the branch and working hours of the operator, and whether a contractual relationship exists. Deny by default. Out-of-portfolio access requires a typed justification and a supervisor notification, logged as part of the query.

2. Single risk graph between AML/CDD and access control. The PEP register the bank maintains for customer due diligence should feed the access-control policy. One risk model. A customer flagged as high-risk for AML purposes gets enhanced controls for access-control purposes. Today many banks treat these as separate workstreams owned by separate teams, which is how AML sees a PEP and the IAM layer sees a customer number.

3. Behavioural baselining, not threshold monitoring. Per-operator baseline of query volume, customer geography, working hours, off-portfolio ratio. Alert on deviation, not on absolute count. Modern UEBA products do this out of the box; the work is in feeding them the right context (portfolio assignments, risk tiers, working patterns) and in actually acting on the alerts. An alert that ends up in an unread queue is the same as no alert, which is what Intesa effectively had.

4. Just-in-time data masking. Default to masked personally identifiable information (PII) at the query layer. Full unmasking requires an explicit justification tied to a contractual operation or documented business need. This reduces the blast radius when unauthorised access happens, and eventually it will. Intesa’s breach was damaging partly because the operator could see everything once inside the record.

5. Tamper-evident audit logs on a separate control plane. Access logs should feed a pipeline that the bank’s compliance function (and, in principle, an auditor or regulator during an inspection) can independently verify, with cryptographic integrity protections. Leaving logs entirely under the control of the operational team that performs the access is a pattern that collapses under adversarial conditions. The substance of auditability isn’t that logs exist; it’s that the logs are independently verifiable.
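To make the patterns concrete, here is a minimal sketch of a single query passing through controls 1, 2, 4, and 5 (control 3 is a detection concern and sits outside the query path). Everything in it is hypothetical: the register contents, the portfolio map, the field names, and the policy rules are assumptions chosen for illustration, not a description of any bank’s system.

```python
import hashlib
import json
from dataclasses import dataclass

# Hypothetical data; all identifiers and values are illustrative.
AML_PEP_REGISTER = {"C-1001"}                  # control 2: the AML register feeds the policy
PORTFOLIOS = {"op-17": {"C-2001", "C-2002"}}   # operator -> assigned customers
SENSITIVE_FIELDS = {"iban", "balance"}
GENESIS = "0" * 64


@dataclass(frozen=True)
class Decision:
    allow: bool
    unmask: bool
    notify_supervisor: bool


def decide(operator_id: str, customer_id: str, justification: str = "") -> Decision:
    """Control 1: resolve access per query, deny by default."""
    in_portfolio = customer_id in PORTFOLIOS.get(operator_id, set())
    high_risk = customer_id in AML_PEP_REGISTER
    justified = bool(justification.strip())
    if in_portfolio and not high_risk:
        return Decision(allow=True, unmask=justified, notify_supervisor=False)
    if justified:
        return Decision(allow=True, unmask=True, notify_supervisor=True)
    return Decision(allow=False, unmask=False, notify_supervisor=True)


def mask(value: str, visible: int = 4) -> str:
    """Control 4: show only the last few characters of a sensitive value."""
    if len(value) <= visible:
        return "*" * len(value)
    return "*" * (len(value) - visible) + value[-visible:]


def render(record: dict, decision: Decision) -> dict:
    if not decision.allow:
        return {}
    if decision.unmask:
        return dict(record)
    return {k: mask(v) if k in SENSITIVE_FIELDS else v for k, v in record.items()}


def append_entry(chain: list, event: dict) -> None:
    """Control 5: each entry's hash covers the previous entry's hash."""
    prev = chain[-1]["hash"] if chain else GENESIS
    payload = json.dumps(event, sort_keys=True)
    digest = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
    chain.append({"event": event, "prev": prev, "hash": digest})


def verify(chain: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in chain:
        payload = json.dumps(entry["event"], sort_keys=True)
        expected = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
        if entry["prev"] != prev or entry["hash"] != expected:
            return False
        prev = entry["hash"]
    return True


def query(chain: list, operator_id: str, customer_id: str,
          record: dict, justification: str = "") -> dict:
    decision = decide(operator_id, customer_id, justification)
    # The attempt is logged whether or not it is allowed.
    append_entry(chain, {"operator": operator_id, "customer": customer_id,
                         "allow": decision.allow, "justification": justification})
    return render(record, decision)
```

Two design choices are worth flagging. A PEP inside the operator’s own portfolio still needs a justification, so the risk tier outranks the portfolio. And a hash chain only detects edits if its latest hash is anchored somewhere the operational team cannot rewrite, which is the job of the separate control plane.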

Where this lands on SEAL

The Intesa estate was, on the regulatory paper, a sovereign architecture. Italian bank, Italian data, Italian regulator, Italian legal regime. That is a legitimate sovereignty posture, and it is also beside the point of this breach.

SEAL rates the sovereignty of the substrate: what jurisdiction your cloud runs in, who holds your keys, whether your operational telemetry leaves the EU. Those are provider-side questions. They matter a lot for workloads where the adversary is a foreign subpoena. They did not protect Intesa against a patient insider on an Italian perimeter.

Making operational control provable at the controller level is a separate discipline. ABAC with portfolio context, behavioural baselines, a single risk graph, tamper-evident audit on a separate control plane: those are controller-side patterns, and they are the substance of this article. A sovereign substrate makes them achievable. The substrate does not do them for you. Both matter. Neither substitutes for the other.

State doctrine, bank CISO leadership, and sovereignty certifications do not change architectures. Controls do.

A European fintech scenario

Picture a mid-sized European fintech, say a retail lender with two million customers on a mostly EU-sovereign stack. The platform has roughly 1,400 operators: branch staff, call-centre agents, risk analysts, compliance staff. Default access grants read rights on the customer base to anyone in a customer-facing role, subject to a quarterly “access review” that is a checkbox exercise.

Monitoring is: Elastic SIEM (Security Information and Event Management platform) fed by application logs, a set of saved searches that alert on high-volume access, and a weekly report that someone in the security team skims. ENISA methodology is on the risk register. UEBA is on the roadmap for next year.

Now add a disgruntled call-centre agent who wants to watch a local politician’s account. Or a compliance analyst who leaks to the press. Or a risk analyst who runs a personal research project. None of them will trip the threshold. The agent runs thirty queries a day, no more than any peer. The compliance analyst is supposed to query PEPs. The risk analyst has a job title that makes aggregate queries look normal.

In two years of that pattern, this fintech is in the same place Intesa was. The difference between €49 million and €0 is the five patterns above and whether the team owns them before anyone calls the press.

What to do in the next 30 days

Three concrete moves.

First, inventory your access model. Pull the list of operator groups with read access to customer records. For each group, ask: is the grant at the role level, or is it resolved per query with portfolio context? If the answer is “at the role level,” the architectural gap is in your access model, not in your sovereignty posture, and it needs a design pass.

Second, look at what your monitoring actually alerts on. If the alerts are quantitative thresholds against volume, you are in Intesa’s position. If the alerts are per-operator deviations from a baseline that includes portfolio context, geographic footprint, and working hours, you are closer to a behavioural-detection posture. In my experience most shops run a mix: baselines on some operator classes, thresholds on the rest. Document where the gaps are.

Third, run the notification tabletop. Not the one where you practise filing an Article 33 notification quickly. The one where you practise filing one accurately. Rehearse the under-reporting failure mode. If your first draft would be “nine customers,” ask how you would know.
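One way to answer “how would you know” in the tabletop is to derive the affected set from the access log and compare it with the draft. The sketch below assumes a simplified log of (operator, customer) pairs and a portfolio map. All of the data is hypothetical, and a real log would also carry timestamps, fields viewed, and justifications.

```python
# Hypothetical access-log rows: (operator_id, customer_id).
access_log = [
    ("op-17", "C-1"), ("op-17", "C-7"), ("op-17", "C-8"),
    ("op-17", "C-7"), ("op-22", "C-9"), ("op-17", "C-9"),
]

# Hypothetical portfolio assignments.
portfolios = {"op-17": {"C-1", "C-2"}}

# Hypothetical first draft of the notification, e.g. built from a complaint list.
reported_in_first_draft = {"C-7"}


def affected_subjects(log, portfolios, operator_id):
    """Distinct customers the operator queried outside their own portfolio."""
    own = portfolios.get(operator_id, set())
    return {cust for op, cust in log if op == operator_id and cust not in own}


derived = affected_subjects(access_log, portfolios, "op-17")
missing = derived - reported_in_first_draft

print(f"reported {len(reported_in_first_draft)}, log-derived {len(derived)}, "
      f"missing from draft: {sorted(missing)}")
```

If the log-derived set is larger than the draft, the draft is wrong, and the tabletop has done its job before a regulator does it for you.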

None of this requires a platform migration. All of it requires architectural discipline the week before anyone is watching, rather than the week after.

The €31.8 million fine is the headline. The 9-vs-3,573 gap is the engineering lesson. Teams that absorb the second don’t have to pay the first.