The Problem: Static Keys in a Compliance-First World

A European fintech is migrating its data lakehouse from AWS to an EU sovereign cloud provider. The architecture is sound: Apache Iceberg tables, multi-engine support, fine-grained governance. But one detail derails the security team: how do query engines access Iceberg tables without holding long-lived credentials?

The legacy approach (issuing static API keys to each data pipeline) creates a compliance nightmare:

- Audit chaos: Static keys don’t reveal who accessed what table or when. Permissions are coarse-grained (S3 bucket level, not table level).

- Key sprawl: Every pipeline, every test environment, every contractor gets a key. Revoking a compromised key means finding every reference in configuration files, restarting the affected services, and hoping you didn’t miss any.

- DORA friction: DORA, the EU’s Digital Operational Resilience Act (applicable from 17 January 2025), mandates encryption, cryptographic key lifecycle management, and role-based access controls with audit trails. Static keys don’t fit this model.

Apache Polaris solves this by replacing static key distribution with catalog-vended credentials: short-lived, scoped credentials generated on demand for each access request. Here’s how it works, and why it matters for EU sovereign migrations.

How Polaris Vends Credentials (The Mechanism)

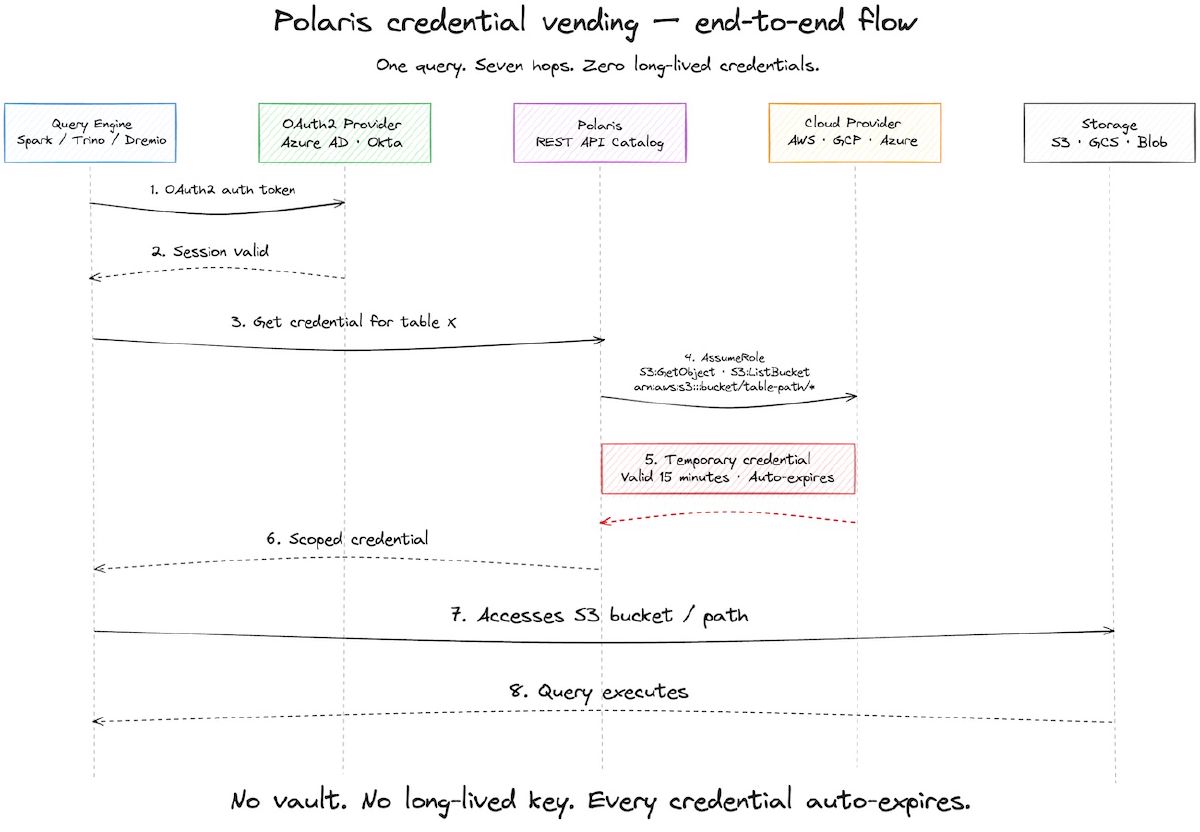

When a query engine like Spark requests access to an Iceberg table via Polaris, the following happens:

1. Authentication: The query engine authenticates to Polaris using OAuth2 (standard in modern cloud platforms).

2. Credential Request: The engine asks Polaris for access to a specific table and its associated data location (e.g., an S3 bucket path).

3. Polaris Assumes a Role: Polaris holds a service account with permissions to assume roles on your cloud provider. It calls the provider’s credentials API:

- AWS: Calls STS AssumeRole with a short session duration (15 minutes by default) and an inline policy restricting access to the specific S3 path

- Google Cloud: Issues scoped (vended) tokens for listing, reading, and writing against the target GCS path. The Polaris 1.3.0 GCS guide confirms the vended-token model but does not publish an exact credential type or default lifetime, so assume short-lived and check your release if you depend on a specific TTL.

- Azure: Issues a scoped credential (SAS token or managed identity token, depending on configuration) for the target container/path. Polaris docs list Azure Blob as a supported credential-vending backend; published defaults for the exact lifetime are not available, so confirm against your release configuration.

4. Return Scoped Credentials: Polaris returns these credentials directly to the query engine.

5. Automatic Expiration: Once the short-lived credential expires (15 minutes by default on AWS), it is automatically invalid. No manual revocation needed. No cleanup. No key sprawl.

This is a different model from traditional catalog systems like Hive Metastore, which assume the query engine already holds cloud credentials and only needs metadata about table structure.
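To make step 3 concrete for AWS, here is a minimal sketch of the kind of request a credential-vending catalog could build: an inline session policy limited to one table prefix, plus the parameters for an STS AssumeRole call. The function names, bucket, prefix, and role ARN are illustrative assumptions, not Polaris internals, and the exact policy Polaris generates may differ.

```python
import json


def build_session_policy(bucket: str, table_prefix: str, allow_write: bool = False) -> dict:
    """Build an inline session policy restricted to one table's storage prefix."""
    prefix = table_prefix.strip("/")
    object_actions = ["s3:GetObject"]
    if allow_write:
        object_actions += ["s3:PutObject", "s3:DeleteObject"]
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Object-level access only under the table prefix.
                "Effect": "Allow",
                "Action": object_actions,
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}/*",
            },
            {
                # Listing is limited to the same prefix.
                "Effect": "Allow",
                "Action": "s3:ListBucket",
                "Resource": f"arn:aws:s3:::{bucket}",
                "Condition": {"StringLike": {"s3:prefix": f"{prefix}/*"}},
            },
        ],
    }


def build_assume_role_params(role_arn: str, session_name: str, policy: dict,
                             duration_seconds: int = 900) -> dict:
    """Build keyword arguments for an STS AssumeRole call (not sent here)."""
    if duration_seconds < 900:
        # STS rejects session durations shorter than 900 seconds (15 minutes).
        raise ValueError("duration_seconds must be at least 900")
    return {
        "RoleArn": role_arn,
        "RoleSessionName": session_name,
        "Policy": json.dumps(policy),
        "DurationSeconds": duration_seconds,
    }


# Hypothetical values for illustration only.
policy = build_session_policy("lake-eu", "analytics/transactions")
params = build_assume_role_params(
    "arn:aws:iam::123456789012:role/polaris-storage", "spark-job-1", policy
)
# With boto3 installed, the call would be:
#   boto3.client("sts").assume_role(**params)["Credentials"]
```

The session policy can only narrow what the assumed role already allows, which is why the role itself can stay broad while each vended credential stays scoped to one table.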

Why This Matters: Sovereignty and Compliance

DORA and Key Management Systems

DORA Article 9 (“Protection and prevention”) and its supporting Regulatory Technical Standards require EU financial entities to implement, in summary:

- Encryption and cryptographic controls: encryption of data at rest, in transit, and in use where appropriate, plus full cryptographic key lifecycle management (generation, renewal, storage, backup, transmission, revocation, destruction).

- Strong authentication and access controls: strict, role-based access controls protecting cryptographic keys and critical assets from unauthorized access or modification.

- Logging and auditability: ICT systems supporting critical functions must maintain the authenticity, integrity, and confidentiality of data and support forensic review.

Polaris credential vending aligns directly:

- No static keys in configuration: Credentials are generated at request time, never stored in config files or vault systems.

- Fine-grained scoping: Credentials are scoped to a specific table path and a short window (15 minutes by default on AWS), not a broad S3 bucket.

- Complete audit attribution: Polaris logs every credential issuance with the requesting principal, the resource, and the timestamp.

A European fintech auditor can now answer: “Who accessed the customer-data table on March 15 at 10:15 UTC?” Not “Who has the key to the bucket?”

SEAL Levels: Visibility → Sovereignty

The SEAL framework maps credential management to sovereignty maturity:

- SEAL Level 1 (Visibility): Basic logging of API calls to tables. Polaris provides this via REST API audit logs.

- SEAL Level 2 (Control): Role-based access enforcement. Polaris RBAC lets you assign permissions per namespace or table, and revoke instantly.

- SEAL Level 3 (Compliance): Short-lived credentials with automatic expiration. Polaris’s short-lived (15-minute default on AWS) tokens + automatic expiration meet this bar.

- SEAL Level 4 (Sovereignty): Credentials controlled by your infrastructure, audit logs in EU data centers, encryption keys never leaving your control.

To reach SEAL Level 4, combine Polaris (credential vending) with:

- EU sovereign cloud provider (OVHcloud, Scaleway, etc.) for storage and KMS

- Polaris deployed in your EU region (not delegating to US-based service)

- Audit log export to EU-resident SIEM/log platform

- RBAC integrated with your EU identity provider (e.g., Azure AD with an EU tenant, Okta with EU data residency)

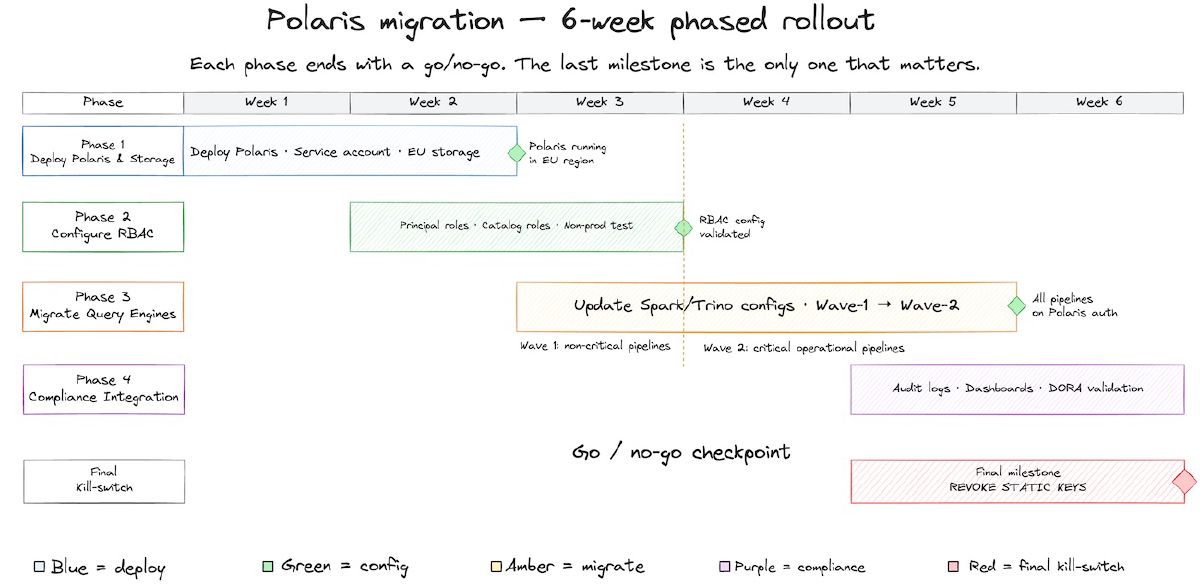

The Migration Path: From Static Keys to Polaris

Here’s the step-by-step pattern for a European fintech migrating from static key management:

Phase 1: Set Up Polaris and Storage

Step 1.1: Deploy Polaris (OSS or managed) in your EU region. Polaris is lightweight. It’s a REST API catalog, not a data warehouse.

Step 1.2: Create a Polaris service account with permissions to assume roles on your EU cloud provider:

- OVHcloud/Scaleway: Create an IAM service account and grant it “AssumeRole” permissions on a custom role.

- AWS EU (Ireland/Frankfurt): Create an IAM role and trust policy for Polaris to assume it.

- Azure EU: Create a managed identity for Polaris with contributor access to your Key Vault and storage account.

Step 1.3: Set up storage (S3-compatible, GCS, or Azure Blob) in your EU region. Configure storage access policies to be assumable only by the Polaris service account, not by individual pipeline services.
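For the AWS case, "assumable only by the Polaris service account" comes down to the role's trust policy. The sketch below builds such a policy and includes a small check you could run in a review script. The ARNs and external ID are placeholders, and the helper names are hypothetical.

```python
import json


def build_trust_policy(polaris_principal_arn: str, external_id: str) -> dict:
    """Trust policy that lets a single principal assume the storage role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": polaris_principal_arn},
                "Action": "sts:AssumeRole",
                # The external ID guards against confused-deputy misuse.
                "Condition": {"StringEquals": {"sts:ExternalId": external_id}},
            }
        ],
    }


def trusted_principals(trust_policy: dict) -> set:
    """Collect every AWS principal that an Allow statement trusts."""
    found = set()
    for statement in trust_policy["Statement"]:
        if statement.get("Effect") != "Allow":
            continue
        principal = statement.get("Principal", {})
        if isinstance(principal, str):  # e.g. "*", which trusts everyone
            found.add(principal)
            continue
        aws = principal.get("AWS", [])
        found.update([aws] if isinstance(aws, str) else aws)
    return found


# Placeholder ARN and external ID for illustration only.
POLARIS_ARN = "arn:aws:iam::123456789012:user/polaris-service"
trust = build_trust_policy(POLARIS_ARN, "polaris-eu-prod")
print(json.dumps(trust, indent=2))
# The storage role should trust exactly one principal: Polaris.
assert trusted_principals(trust) == {POLARIS_ARN}
```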

Step 1.4: Ingest existing Iceberg tables into Polaris. Polaris uses the Iceberg REST API; it doesn’t own the data, just the metadata catalog.
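Because the data files stay where they are, "ingesting" a table can be as small as registering its current metadata file. The sketch below builds a register-table request in the shape defined by the Iceberg REST catalog specification. The base URI, catalog name, and metadata path are placeholders, and the helper is hypothetical, so check the endpoint against your Polaris release before relying on it.

```python
import json
from urllib.parse import quote


def build_register_table_request(base_uri: str, catalog: str, namespace: list,
                                 table: str, metadata_location: str) -> dict:
    """Describe the HTTP request that registers an existing Iceberg table."""
    # Multi-level namespaces are joined with the 0x1F unit separator.
    encoded_namespace = quote("\x1f".join(namespace), safe="")
    url = (
        f"{base_uri.rstrip('/')}/v1/{quote(catalog, safe='')}"
        f"/namespaces/{encoded_namespace}/register"
    )
    body = {"name": table, "metadata-location": metadata_location}
    return {
        "method": "POST",
        "url": url,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(body),
    }


# Placeholder values for illustration only.
request = build_register_table_request(
    "https://your-polaris-instance.eu/api/catalog",
    "lakehouse",
    ["analytics"],
    "ingest_transactions",
    "s3://lake-eu/analytics/ingest_transactions/metadata/v3.metadata.json",
)
print(request["method"], request["url"])
```

The request still needs an `Authorization: Bearer <token>` header from a principal that is allowed to register tables in that namespace.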

Phase 2: Configure RBAC and Credential Scoping

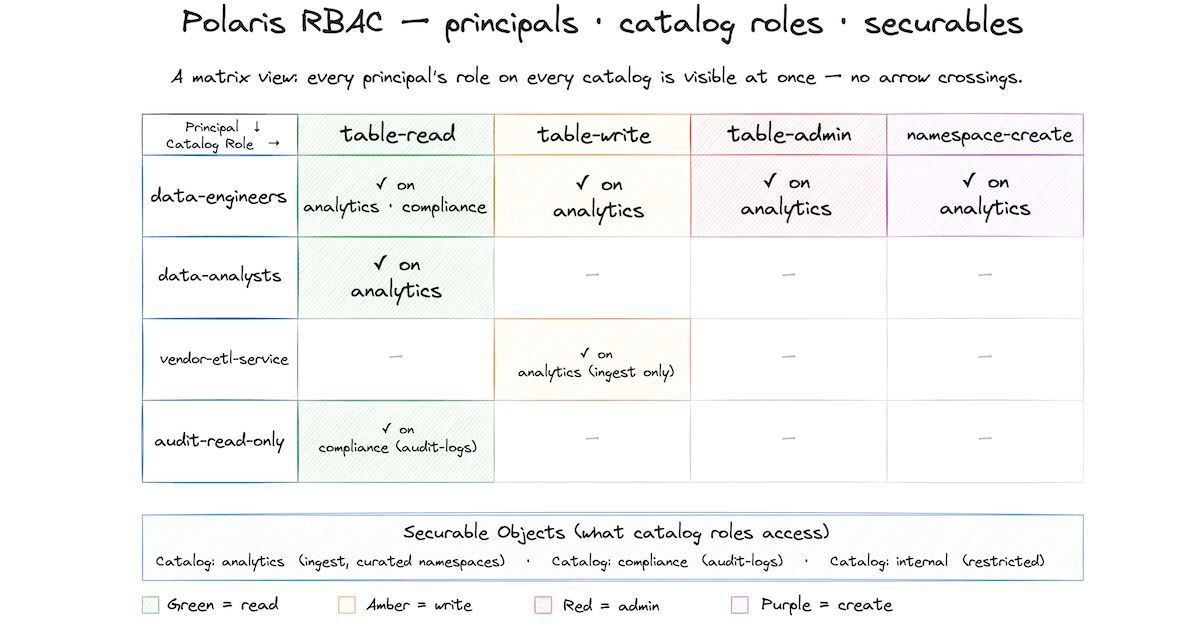

Step 2.1: Define principal roles in Polaris:

- data-engineers (internal team)

- data-analysts (internal team)

- vendor-etl-service (third-party data pipeline)

- audit-read-only (compliance team)

Step 2.2: Define catalog roles with granular permissions:

- table-read (SELECT on specific tables)

- table-write (INSERT, UPDATE, DELETE on specific tables)

- table-admin (ALTER, DROP on specific tables)

- namespace-create (CREATE TABLE within a namespace)

Step 2.3: Grant catalog roles to principal roles, with each catalog role’s privileges scoped to a namespace or table. Example:

data-engineers → table-admin on namespace 'analytics'

vendor-etl-service → table-write on table 'analytics.ingest_transactions'

audit-read-only → table-read on namespace 'compliance'
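The three assignments above can be read as a small lookup: a principal role holds catalog roles, and each catalog role allows certain operations on a namespace or table. The toy model below encodes that reading so the expected outcomes can be tested. It is a simplified illustration using this article's role names, not Polaris's actual privilege model or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    catalog_role: str
    securable: str  # a namespace ("analytics") or a table ("analytics.t")


# Operations implied by each catalog role, as listed in Step 2.2.
ROLE_ACTIONS = {
    "table-read": {"SELECT"},
    "table-write": {"INSERT", "UPDATE", "DELETE"},
    "table-admin": {"ALTER", "DROP"},
    "namespace-create": {"CREATE TABLE"},
}

# Assignments from Step 2.3.
ASSIGNMENTS = {
    "data-engineers": [Grant("table-admin", "analytics")],
    "vendor-etl-service": [Grant("table-write", "analytics.ingest_transactions")],
    "audit-read-only": [Grant("table-read", "compliance")],
}


def covers(securable: str, table: str) -> bool:
    """A grant covers the exact table, or any table inside the namespace."""
    return table == securable or table.startswith(securable + ".")


def is_allowed(principal_role: str, action: str, table: str) -> bool:
    """Return True if any grant held by the role permits the action."""
    return any(
        action in ROLE_ACTIONS[grant.catalog_role] and covers(grant.securable, table)
        for grant in ASSIGNMENTS.get(principal_role, [])
    )


# The vendor can write to its one table and nothing else.
print(is_allowed("vendor-etl-service", "INSERT", "analytics.ingest_transactions"))
print(is_allowed("vendor-etl-service", "INSERT", "analytics.customers"))
```

Writing the expected allow and deny cases down like this before configuring the catalog gives you a ready-made test list for the RBAC check in a non-production engine.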

Step 2.4: Enable Polaris’s event persistence (introduced in 1.2.0-incubating, currently a preview feature with a schema subject to change between releases) to log catalog events. Export logs daily to your EU SIEM or data lake.

Phase 3: Migrate Query Engines

Step 3.1: Update your Spark, Trino, and Dremio configurations to authenticate against Polaris instead of cloud storage directly:

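# Spark configuration (Iceberg REST catalog pointing at Polaris)

spark.sql.catalog.polaris = org.apache.iceberg.spark.SparkCatalog

spark.sql.catalog.polaris.type = rest

# Polaris serves the Iceberg REST API under /api/catalog by default; adjust if you proxy it

spark.sql.catalog.polaris.uri = https://your-polaris-instance.eu/api/catalog

spark.sql.catalog.polaris.warehouse = <your-polaris-catalog-name>

# OAuth2 client credentials for the service principal (or set .token to a pre-issued bearer token instead)

spark.sql.catalog.polaris.credential = <client-id>:<client-secret>

spark.sql.catalog.polaris.scope = PRINCIPAL_ROLE:ALL

# Ask the catalog to vend scoped storage credentials

spark.sql.catalog.polaris.header.X-Iceberg-Access-Delegation = vended-credentials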

Query engines no longer need static cloud credentials. They authenticate to Polaris (OAuth2), and Polaris hands them scoped credentials.
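Under the hood, that exchange is two HTTP requests. The sketch below builds both without sending them: an OAuth2 client-credentials token request, then a load-table request that asks for vended credentials through the `X-Iceberg-Access-Delegation` header. Paths follow the Iceberg REST catalog specification. The host, catalog name, and credentials are placeholders, and the helper names are hypothetical.

```python
from urllib.parse import quote, urlencode


def build_token_request(base_uri: str, client_id: str, client_secret: str,
                        scope: str = "PRINCIPAL_ROLE:ALL") -> dict:
    """OAuth2 client-credentials request against the catalog's token endpoint."""
    form = {
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        "scope": scope,
    }
    return {
        "method": "POST",
        "url": f"{base_uri.rstrip('/')}/v1/oauth/tokens",
        "headers": {"Content-Type": "application/x-www-form-urlencoded"},
        "body": urlencode(form),
    }


def build_load_table_request(base_uri: str, catalog: str, namespace: list,
                             table: str, access_token: str) -> dict:
    """Load-table request that asks the catalog to vend storage credentials."""
    encoded_namespace = quote("\x1f".join(namespace), safe="")
    url = (
        f"{base_uri.rstrip('/')}/v1/{quote(catalog, safe='')}"
        f"/namespaces/{encoded_namespace}/tables/{quote(table, safe='')}"
    )
    return {
        "method": "GET",
        "url": url,
        "headers": {
            "Authorization": f"Bearer {access_token}",
            "X-Iceberg-Access-Delegation": "vended-credentials",
        },
    }


# Placeholder values for illustration only.
BASE = "https://your-polaris-instance.eu/api/catalog"
token_request = build_token_request(BASE, "my-client-id", "my-client-secret")
table_request = build_load_table_request(
    BASE, "lakehouse", ["analytics"], "ingest_transactions", "<access-token>"
)
print(token_request["url"])
print(table_request["url"])
```

For S3-backed tables, the temporary keys come back in the load-table response's `config` map under properties such as `s3.access-key-id`, `s3.secret-access-key`, and `s3.session-token`. Engines like Spark handle this exchange for you, so the sketch is mainly useful for debugging with a plain HTTP client.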

Step 3.2: Update data pipeline configurations. Remove static S3/GCS credentials. Point to Polaris instead. Test with one non-critical pipeline first.

Step 3.3: Once all pipelines migrate to Polaris authentication, revoke the static service account keys and delete them. This is your security moment: no more credential sprawl.

Phase 4: Audit and Compliance Integration

Step 4.1: Enable audit log export from Polaris to your EU-resident log platform (e.g., ELK stack, Splunk EU, or a data lake in your EU cloud).

Step 4.2: Create compliance dashboards:

- “Access events per principal per table, per week”

- “Privilege escalations or unusual access patterns”

- “Failed authentication attempts”

- “Credential issuances and expirations”
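As an illustration of the first dashboard, the sketch below counts access events per principal, per table, per ISO week. It assumes events have already been exported as dictionaries with `timestamp`, `principal`, and `resource` keys. That record shape is an assumption for this example, not a published Polaris schema.

```python
from collections import Counter
from datetime import datetime


def weekly_access_counts(events: list) -> Counter:
    """Count events keyed by (principal, resource, ISO week label)."""
    counts = Counter()
    for event in events:
        # Accept a trailing "Z" for UTC, which older Pythons cannot parse.
        timestamp = datetime.fromisoformat(event["timestamp"].replace("Z", "+00:00"))
        iso = timestamp.isocalendar()
        week = f"{iso[0]}-W{iso[1]:02d}"
        counts[(event["principal"], event["resource"], week)] += 1
    return counts


# Hypothetical exported events.
events = [
    {"timestamp": "2026-04-07T08:00:00Z", "principal": "customer-a",
     "resource": "customer-a-data/transactions"},
    {"timestamp": "2026-04-09T10:15:32Z", "principal": "customer-a",
     "resource": "customer-a-data/transactions"},
    {"timestamp": "2026-04-14T09:30:00Z", "principal": "customer-a",
     "resource": "customer-a-data/transactions"},
]

for (principal, resource, week), count in sorted(weekly_access_counts(events).items()):
    print(week, principal, resource, count)
```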

Step 4.3: Validate DORA compliance:

- Audit trail completeness: Yes, all access logged with principal, table, timestamp, and result.

- Key management: Yes, Polaris assumes short-lived roles; no static keys.

- Role-based access: Yes, Polaris RBAC enforced at table level.

- Incident response: Yes, you can instantly revoke principal roles (no key rotation cycle needed).

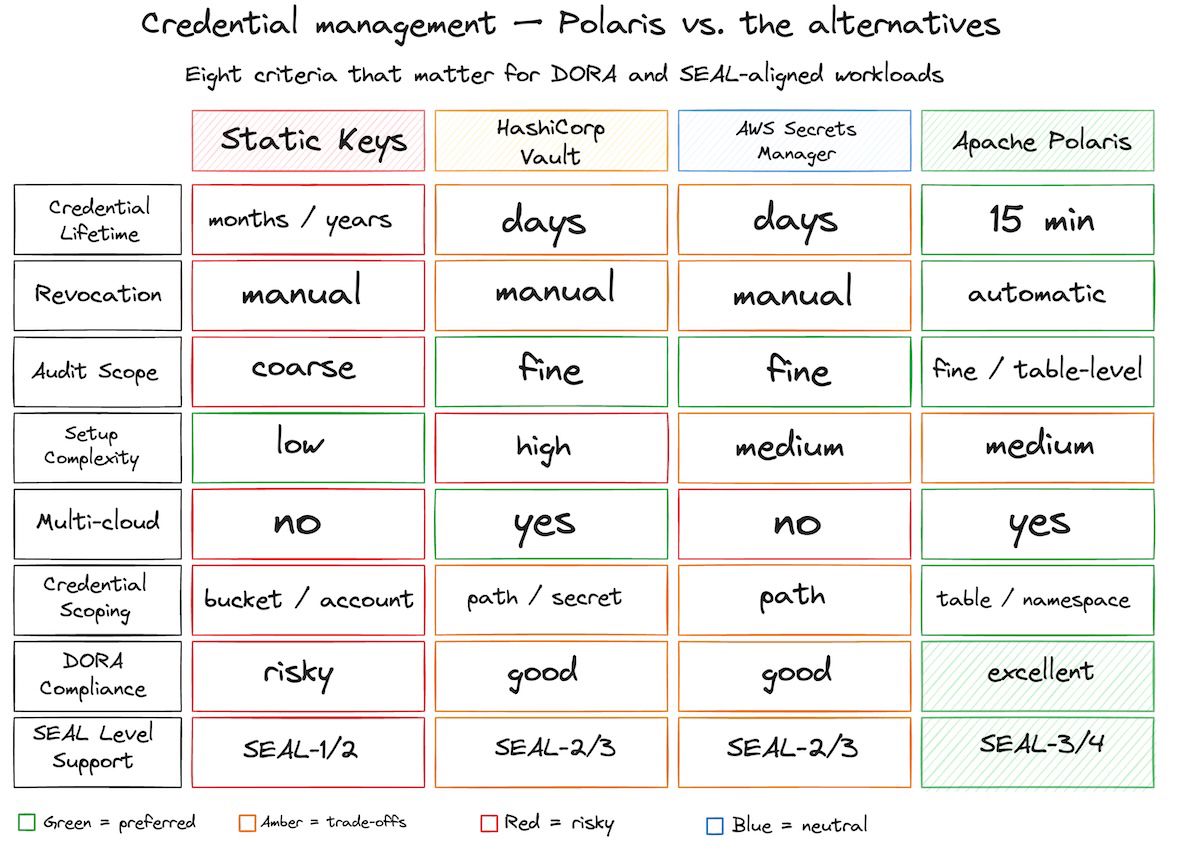

Polaris vs. Traditional Approaches: A Comparison

| Aspect | Static Keys | HashiCorp Vault | AWS Secrets Manager | Apache Polaris |
|---|---|---|---|---|
| Credential lifetime | Months/years | Days (configurable) | Days (configurable) | 15 minutes |
| Revocation | Manual, tedious | Manual, fast | Manual, fast | Automatic |
| Audit trail | Coarse (bucket level) | Fine (per secret) | Fine (per secret) | Fine (per table) |
| Setup complexity | Low | High (self-hosted) | Medium (AWS only) | Medium (REST API) |
| Multi-cloud | No | Yes | No | Yes |
| Scoping | Bucket/account | Path/secret | Path | Table/namespace |
| DORA compliance | Risky | Good | Good (US jurisdiction) | Excellent |
| SEAL Level support | Level 1-2 | Level 2-3 | Level 2-3 (US risk) | Level 3-4 |
| Data gravity | Low | Medium | High (AWS) | Low |

When to use Polaris: You have Iceberg tables, need fine-grained governance per table, require DORA compliance, and operate in or migrate to EU sovereign clouds.

When Vault/Secrets Manager are better: You need to manage secrets that have nothing to do with table storage (API keys, certificates, database passwords), operate across heterogeneous systems, or are already committed to one of those tools.

Real-World Integration: Multi-Tenant Data Lakehouse

A European fintech hosts a multi-tenant data lakehouse where customer tenants can query their data via Polaris:

- Customer A logs in via federated identity (Azure AD EU). Polaris OAuth2 flow validates the login.

- Customer A is a principal in Polaris assigned to the customer-a principal role.

- This role is granted table-read on the customer-a-data namespace.

- Customer A’s Spark job requests a table. Polaris generates a short-lived (15-minute default), scoped AWS credential for the customer-a-data/transactions table.

- Spark runs the query against S3, using the scoped credential. The query completes in 2 minutes.

- After 15 minutes, the credential expires automatically. Customer A’s notebook can’t run queries without re-authenticating.

- Audit log entry:

2026-04-09T10:15:32Z | principal=customer-a | action=credential_issued | resource=customer-a-data/transactions | duration=15m | result=success
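An entry like this is easy to turn into a structured record, which is what lets an auditor ask who touched a table in a given window. The parser below assumes the pipe-delimited `key=value` layout shown in the example line. Real Polaris event output may be shaped differently, so treat the format and the helper names as illustrative.

```python
from datetime import datetime, timezone


def parse_audit_line(line: str) -> dict:
    """Parse 'timestamp | key=value | key=value ...' into a dictionary."""
    timestamp, *pairs = [part.strip() for part in line.split("|")]
    record = {"timestamp": datetime.fromisoformat(timestamp.replace("Z", "+00:00"))}
    for pair in pairs:
        key, _, value = pair.partition("=")
        record[key] = value
    return record


def who_accessed(records: list, resource: str, start: datetime, end: datetime) -> set:
    """Principals with an event on the resource inside [start, end]."""
    return {
        record["principal"]
        for record in records
        if record["resource"] == resource and start <= record["timestamp"] <= end
    }


LINE = ("2026-04-09T10:15:32Z | principal=customer-a | action=credential_issued | "
        "resource=customer-a-data/transactions | duration=15m | result=success")

record = parse_audit_line(LINE)
window_start = datetime(2026, 4, 9, 10, 0, tzinfo=timezone.utc)
window_end = datetime(2026, 4, 9, 10, 30, tzinfo=timezone.utc)
print(who_accessed([record], "customer-a-data/transactions", window_start, window_end))
```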

This architecture satisfies DORA requirements:

- ✅ Encryption (Polaris does not handle data encryption directly; it assumes storage-level encryption such as S3 SSE-S3 / SSE-KMS and TLS in transit)

- ✅ Role-based access control (enforced per principal role and catalog role)

- ✅ Audit trails (all access logged, attributed to principal, with fine-grained resource scoping)

- ✅ Incident response (revoke a principal role instantly; all future credential requests fail)

Implementation Checklist

Pre-Migration

- Assess current credential management system (static keys, Vault, Secrets Manager)

- Audit all service principals and their current privileges

- Identify all Iceberg tables and their access patterns

- Choose EU cloud provider (OVHcloud, Scaleway, AWS EU regions, Azure EU regions, Google Cloud EU regions)

- Allocate budget for Polaris (OSS, managed Dremio Cloud, or vendor support)

Deployment

- Deploy Polaris in your EU region (containerized or managed service)

- Create Polaris service account with cloud provider role-assumption permissions

- Configure storage (S3-compatible, GCS, or Azure Blob) in your EU region

- Ingest existing Iceberg tables into Polaris metadata catalog

- Enable OAuth2 provider (Okta, Azure AD, Auth0) and integrate with Polaris

Access Control Setup

- Define principal roles (data-engineers, vendors, read-only, etc.)

- Define catalog roles (table-read, table-write, table-admin, namespace-create)

- Assign principal roles to catalog roles per namespace/table

- Test RBAC with a non-production query engine (Spark, Trino, etc.)

Migration

- Update Spark, Trino, and Dremio configurations to use Polaris catalog

- Migrate one non-critical pipeline first; verify credential vending works

- Migrate remaining pipelines in phases (analytics, operational, external)

- Monitor audit logs during migration for anomalies

- Revoke static service account keys once all pipelines are migrated

Compliance

- Configure audit log export to EU-resident SIEM or data lake

- Build compliance dashboards (access per principal, privilege changes, incidents)

- Document Polaris RBAC configuration in your Data Governance Policy

- Schedule quarterly access reviews (Polaris audit logs make this trivial)

- Test incident response: revoke a principal role and verify access is denied

Next Steps

Polaris is production-ready today. It graduated from the Apache Incubator to an Apache Software Foundation Top-Level Project on 18 February 2026, with active contributions from Snowflake, Dremio, and a broad community.

If you’re migrating a data lakehouse to EU sovereign infrastructure and need to close the credential management gap, Polaris credential vending gets you to SEAL Level 3-4 compliance with less operational overhead than Vault or legacy key rotation.

Start with a pilot: deploy Polaris in your EU region, ingest 2-3 Iceberg tables, and test credential vending with one Spark cluster. You’ll see immediately how much simpler the security story becomes.

The fintech we mentioned at the start? They migrated to Polaris in 6 weeks. Audit team approved. DORA ready. Static keys deleted.