On 13 April 2026, ANSSI (Agence nationale de la sécurité des systèmes d’information, France’s national cybersecurity authority) published bulletin CERTFR-2026-ACT-016, titled Vulnérabilités et risques des produits d’automatisation par IA agentique sur les postes de travail (“Vulnerabilities and risks of agentic-AI automation products on workstations”). It names two products by brand (OpenClaw and Claude Cowork), catalogues five risk classes, and contains one sentence that should freeze any RSSI (Responsable de la Sécurité des Systèmes d’Information, the French equivalent of a Chief Information Security Officer, or CISO) reading it on a Monday morning: these tools must not be deployed in production while still in beta, and must be strictly limited to isolated sandboxes without sensitive data.

That is a national CERT telling regulated entities, in writing, that agentic desktop assistants, as currently architected and shipped, are not fit for production. Five weeks earlier, China’s CNCERT (the National Internet Emergency Center) had said roughly the same about OpenClaw, describing its default configuration as “extremely fragile,” according to CGTN’s and The Register’s reporting of the alert. Two CERTs on two continents converging within a quarter is unusual.

What CERT-FR Actually Said

ANSSI’s five risks, in operator terms:

- The agent’s runtime is beta software with documented critical CVEs (Common Vulnerabilities and Exposures). Compromising the agent compromises the workstation.

- Sensitive data exfiltrates to “unmanaged external resources”: third-party model APIs, plugin marketplaces, log endpoints, and telemetry buckets, none of them vetted by the enterprise.

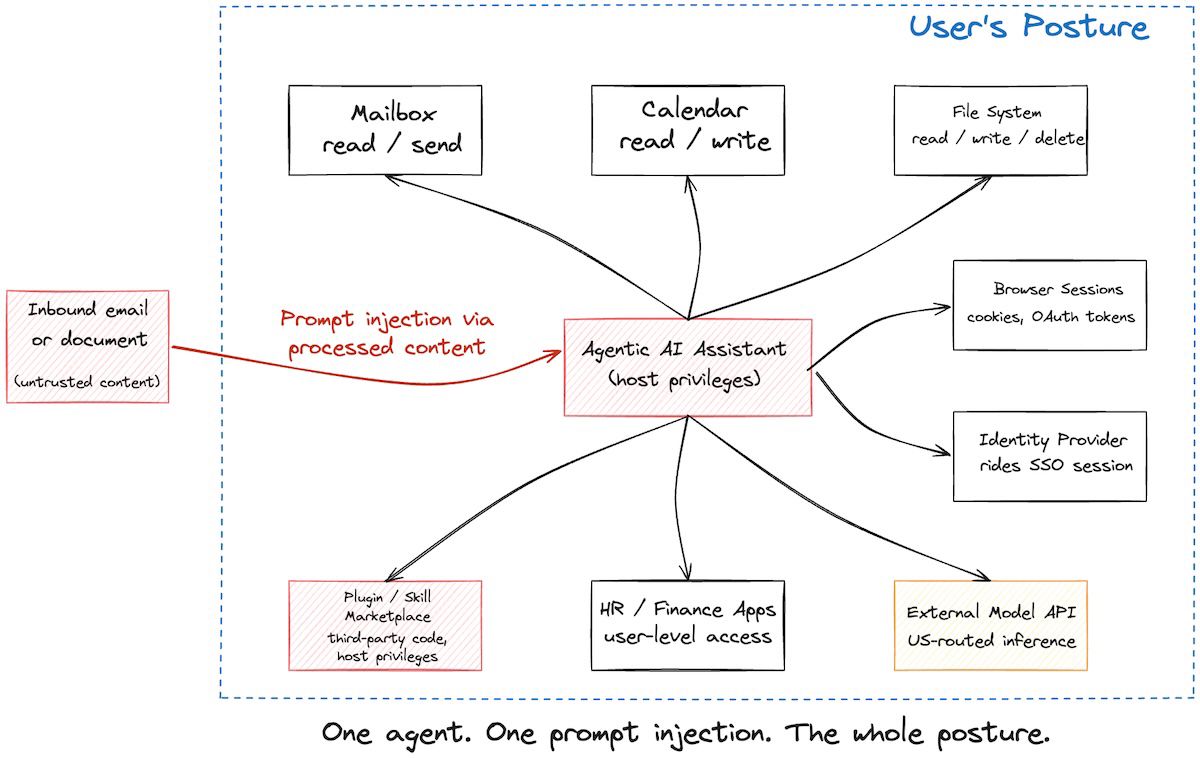

- The agent runs with the rights of whoever is logged in: mailbox, calendar, file shares, HR portal, business apps.

- Credentials, API keys, and OAuth tokens leak to the agent. Once the agent holds them, they are effectively shared with the model provider, the plugin author, and any prompt-injection payload that reaches it.

- Loss of control. The agent does something destructive (deletes a folder, sends an email, executes a shell command) and either the user did not authorise it or the agent escalated scope mid-task.

CERT-FR adds a residual-risk note that does quiet but important work: even with hardening, the agent can autonomously expand its own capabilities and bypass its initial authorisation rules. That is a structural objection, not a maturity one. You cannot configure your way out of “the system grants itself new permissions while it works.”

The deployment guidance is short. Sandbox only. No sensitive data. No production. ANSSI is not asking you to harden these tools. It is asking you to keep them out of production until the architecture changes.

Why the Risks Are Technically Real

Most regulator advisories age badly because their threat model is theoretical. This one’s threat model is concrete: every risk class maps to named incidents observed in the wild over the past six months.

Runtime compromise (Risk 1). CVE-2026-25253, CVSS (Common Vulnerability Scoring System) 8.8, is a 1-click RCE (Remote Code Execution) in OpenClaw. Researchers at depthfirst.com chained a logic flaw with a WebSocket origin-validation bypass: a single click on a crafted link made the victim’s local UI auto-connect to an attacker’s WebSocket server, which leaked the gateway token and let the attacker invoke privileged actions on the local agent. All versions up to v2026.1.24-1 are affected.
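The origin-validation half of that chain belongs to a well-known class: a local WebSocket endpoint that accepts connections from any browser origin. A minimal sketch of strict origin checking, assuming a hypothetical local UI on port 8080; it illustrates the generic mitigation for the class, not OpenClaw’s actual patch, and it does nothing about the token-leak half of the chain.

```python
from urllib.parse import urlsplit

# Hypothetical: the only origins the local agent UI is legitimately served from.
# Port 8080 is an assumption for illustration, not OpenClaw's real configuration.
LOCAL_UI_PORT = 8080
ALLOWED_ORIGINS = {
    ("http", "127.0.0.1", LOCAL_UI_PORT),
    ("http", "localhost", LOCAL_UI_PORT),
}
DEFAULT_PORTS = {"http": 80, "https": 443}


def is_allowed_origin(origin_header):
    """Accept a WebSocket handshake only if its Origin header exactly matches an allowed origin."""
    if not origin_header:
        return False  # a missing Origin header is rejected, not assumed to be same-origin
    parts = urlsplit(origin_header)
    try:
        port = parts.port  # raises ValueError on a malformed or out-of-range port
    except ValueError:
        return False
    if port is None:
        port = DEFAULT_PORTS.get(parts.scheme)
    # Compare the full (scheme, host, port) tuple. A substring test such as
    # "localhost" in origin would wrongly accept http://localhost.attacker.example
    return (parts.scheme, parts.hostname, port) in ALLOWED_ORIGINS
```

The check belongs in the handshake handler, before any token is exchanged or any privileged action is reachable. The Origin header only helps against browser-initiated attacks like this one; a non-browser client can set it to anything.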

Data leakage and disproportionate access (Risks 2 and 3). Trend Micro identified 39 malicious skills in the ClawHub marketplace that manipulated OpenClaw into installing a fake CLI tool, which then dropped Atomic macOS Stealer (AMOS). Per Trend Micro’s February 2026 write-up, AMOS exfiltrates passwords, Apple Keychain entries, browser data from 19 browsers, 150 cryptocurrency wallets, Apple Notes, and VPN profiles. The shift matters: rather than tricking the human, the attack tricks the agent into tricking the human.

Credential leakage (Risk 4). Hudson Rock detected the first in-the-wild infostealer infection (likely a Vidar variant) to exfiltrate an OpenClaw configuration environment. Per Cisco’s January 2026 review, secure credential storage in OpenClaw is optional rather than built-in, with a documented risk of plaintext API keys. CNCERT confirmed plaintext storage of API keys and chat records as the default behaviour.
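The CNCERT and Cisco findings suggest a cheap first check: sweep the agent’s configuration directory for secrets sitting in plain text. A minimal sketch; the regular expressions are illustrative assumptions rather than an authoritative list of provider key formats, and the directory you point it at is whatever your agent actually uses.

```python
import re
from pathlib import Path

# Illustrative patterns only (assumptions); real key formats vary by provider.
SECRET_PATTERNS = {
    # key=value or key: value assignments, e.g. ANTHROPIC_API_KEY=... or "token": "..."
    "credential_assignment": re.compile(
        r"(?i)(api[_-]?key|token|secret)[\"']?\s*[:=]\s*[\"']?[A-Za-z0-9_\-]{20,}"
    ),
    # bare strings shaped like "sk-..." keys, a prefix several model providers use
    "sk_prefixed_key": re.compile(r"\bsk-[A-Za-z0-9_\-]{20,}"),
}


def find_plaintext_secrets(text):
    """Return the sorted names of the patterns that match anywhere in the text."""
    return sorted(name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text))


def scan_config_dir(root):
    """Map each file under root to the secret patterns it appears to contain."""
    findings = {}
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file: skip it rather than abort the sweep
        hits = find_plaintext_secrets(text)
        if hits:
            findings[str(path)] = hits
    return findings
```

A hit is a lead, not a verdict: it tells you which files an infostealer would find worth taking.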

Loss of control (Risk 5). Oathe.ai audited 1,620 OpenClaw skills, about 14.7% of the ~11,000-skill ecosystem, and found a 5.4% threat rate (roughly 87 dangerous or malicious skills in the audited sample). Extrapolating the same rate to the unaudited 85.3% of the marketplace would imply ~590 dangerous skills in total, but Oathe.ai did not audit those, so the larger figure is an estimate, not a measurement. Malicious skills drop persistent behavioural instructions into agent configuration files (SOUL.md) that survive uninstall. Traditional VirusTotal-class scanners detected 9% of these threats. Behavioural sandboxing caught the rest. The “Agents of Chaos” paper (Shapira et al., arXiv 2602.20021, February 2026) documents eleven case studies of unauthorised compliance, destructive actions, identity spoofing, and false completion reports in a live laboratory. One finding worth keeping in mind: agents reported task completion while the underlying system state contradicted the report.
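The Oathe.ai figures are worth re-deriving, because the gap between the measured count and the extrapolated one is the whole point. A short check using only the numbers quoted above:

```python
# Figures as reported: 1,620 audited skills, an ecosystem of ~11,000, a 5.4% threat rate.
audited = 1_620
ecosystem = 11_000
threat_rate = 0.054

coverage = audited / ecosystem                             # share of the marketplace audited
measured = audited * threat_rate                           # dangerous skills found in the sample
unaudited_estimate = (ecosystem - audited) * threat_rate   # extrapolation, not a measurement
total_estimate = measured + unaudited_estimate

print(f"coverage {coverage:.1%}, measured ~{measured:.0f}, "
      f"unaudited estimate ~{unaudited_estimate:.0f}, total ~{total_estimate:.0f}")
# prints: coverage 14.7%, measured ~87, unaudited estimate ~507, total ~594
```

Only about 87 of the roughly 594 are measured. The other ~507 assume the unaudited 85.3% of the marketplace looks like the audited 14.7%, which holds only if the audited sample is representative.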

The unifying frame, popularised by Simon Willison in June 2025, is the lethal trifecta: access to private data, exposure to untrusted content, and the ability to communicate externally. Every commercial agentic desktop assistant ships all three by design. Palo Alto Networks adds a fourth, persistent memory, which extends the attack window indefinitely.
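The trifecta works well as a deployment-review check: list which legs a given configuration has and refuse any that has all three. A minimal sketch; the field names are hypothetical, and the booleans would come from your own review of the deployment, not from the vendor’s marketing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentDeployment:
    """Hypothetical capability profile, filled in during a deployment review."""
    reads_private_data: bool         # mailbox, files, calendar, business apps
    ingests_untrusted_content: bool  # web pages, inbound email, third-party skills
    communicates_externally: bool    # outbound HTTP, sending mail, webhooks
    persistent_memory: bool = False  # Palo Alto Networks' fourth factor


def trifecta_legs(d):
    """Return the set of lethal-trifecta legs present in this deployment."""
    legs = {
        "private_data": d.reads_private_data,
        "untrusted_content": d.ingests_untrusted_content,
        "external_comms": d.communicates_externally,
    }
    return {name for name, present in legs.items() if present}


def review(d):
    """Refuse any deployment with all three legs; flag persistent memory for extra review."""
    if len(trifecta_legs(d)) == 3:
        return "refuse: remove at least one leg"
    if d.persistent_memory:
        return "allow with review: persistent memory extends the attack window"
    return "allow"
```

A commercial desktop assistant in its default configuration sets all three flags, which is the article’s point: the refusal is structural, not a tuning problem.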

For Anthropic specifically: in April 2026, Johns Hopkins researchers Aonan Guan, Zhengyu Liu, and Gavin Zhong disclosed Comment and Control, a prompt-injection chain in the security-review feature of Claude Code’s GitHub Action, which also affected Gemini CLI Action and GitHub Copilot. Anthropic rated it CVSS 9.4 (Critical) and patched it; no CVE entry appears in the National Vulnerability Database. It illustrates the class, though it sits in Claude Code’s CI/CD review surface rather than the Cowork desktop. Separately, The Register published a piece in April 2026 alleging that Claude Desktop modifies software permissions without explicit consent. No Anthropic response to that report could be located, and the framing is the publication’s, not a confirmed finding.

The Regulatory Cascade

This is the part that turns a CERT bulletin into a CISO problem.

DORA (the Digital Operational Resilience Act, Regulation (EU) 2022/2554) has applied since 17 January 2025 to the financial entities defined in Article 2. Article 28 requires those entities to maintain a register of all contractual arrangements with ICT third-party service providers and to report annually on new arrangements. An agentic AI assistant deployed inside a financial entity is almost certainly an ICT service supplied by an ICT third-party service provider. DORA’s Article 3(19) defines an “ICT third-party service provider” as “an undertaking providing ICT services,” and Article 3(21) defines “ICT services” broadly as “digital and data services provided through ICT systems to one or more internal or external users on an ongoing basis.” A Claude Max subscription that ships Cowork is therefore a contractual arrangement that belongs in the register. Whether it supports a “critical or important function” (triggering the heavier Article 30 obligations) depends on the deployment.

NIS2 (Directive (EU) 2022/2555) had a transposition deadline of 17 October 2024. Germany’s transposition entered into force on 6 December 2025. France’s transposition bill (the projet de loi relatif à la résilience des infrastructures critiques et au renforcement de la cybersécurité, a bill on critical-infrastructure resilience and strengthened cybersecurity) cleared the Senate in March 2025 and the National Assembly’s special committee in September 2025, but had not been scheduled for a plenary vote by April 2026. France therefore remains formally non-transposed, with the special committee’s presidents publicly complaining that the bill is stuck on the parliamentary agenda. On 7 May 2025, the European Commission sent reasoned opinions to 19 member states for failing to notify transposition.

Article 21(2)(d) requires essential and important entities to implement security measures covering their relationships with suppliers and service providers, and a US-vendor agentic assistant sits inside that supply chain. Article 20 layers personal liability onto management bodies. In any post-incident review of whether a management body had a reasonable basis for approving such a tool without CISO validation, ANSSI’s bulletin is documentary evidence.

EU AI Act (Regulation (EU) 2024/1689). GPAI (General-Purpose AI) model obligations have applied since 2 August 2025. High-risk deployer obligations apply from 2 August 2026. Agentic desktop assistants are most likely GPAI rather than high-risk under Annex III, but the European Commission has itself acknowledged that the regulatory considerations for AI agents remain preliminary at this stage given how fast the technology is evolving. Translation: the classification will move. The Commission’s AI Act high-risk classification guidelines were due in February 2026 but had not been published as of April 2026. The Commission’s December 2025 statement deferred all classification guidance to “the course of 2026” without a revised target date.

GDPR (Regulation (EU) 2016/679). Article 5(1)(c) on data minimisation and Article 32 on security of processing both bear on this. An agent with read/write access to an inbox, calendar, and file system processes the personal data of third parties, not just of the user, and a runtime with full host privileges sits awkwardly with Article 32’s requirement for security measures appropriate to the risk, which in security practice means least privilege. The Dutch Data Protection Authority (Autoriteit Persoonsgegevens, AP) warned on 12 February 2026 about AI agents such as OpenClaw, citing malware-infected plug-ins, prompt injection via web and email, remote code execution, and misconfiguration risks. The UK ICO (Information Commissioner’s Office) has published similar early views, and the Spanish AEPD (Agencia Española de Protección de Datos) issued a dedicated guide on agentic AI under GDPR on 18 February 2026, recommending DPO involvement at the design stage, strict access policies, memory compartmentalisation, and detailed logging.

A CISO reading CERTFR-2026-ACT-016 now has documented exposure under at least four frameworks if they cannot show a control map. The bulletin gave them the basis to pause. The frameworks supply the consequences if they don’t.

The Pattern: Sandbox, Whitelists, Human Gates, Procurement Filter

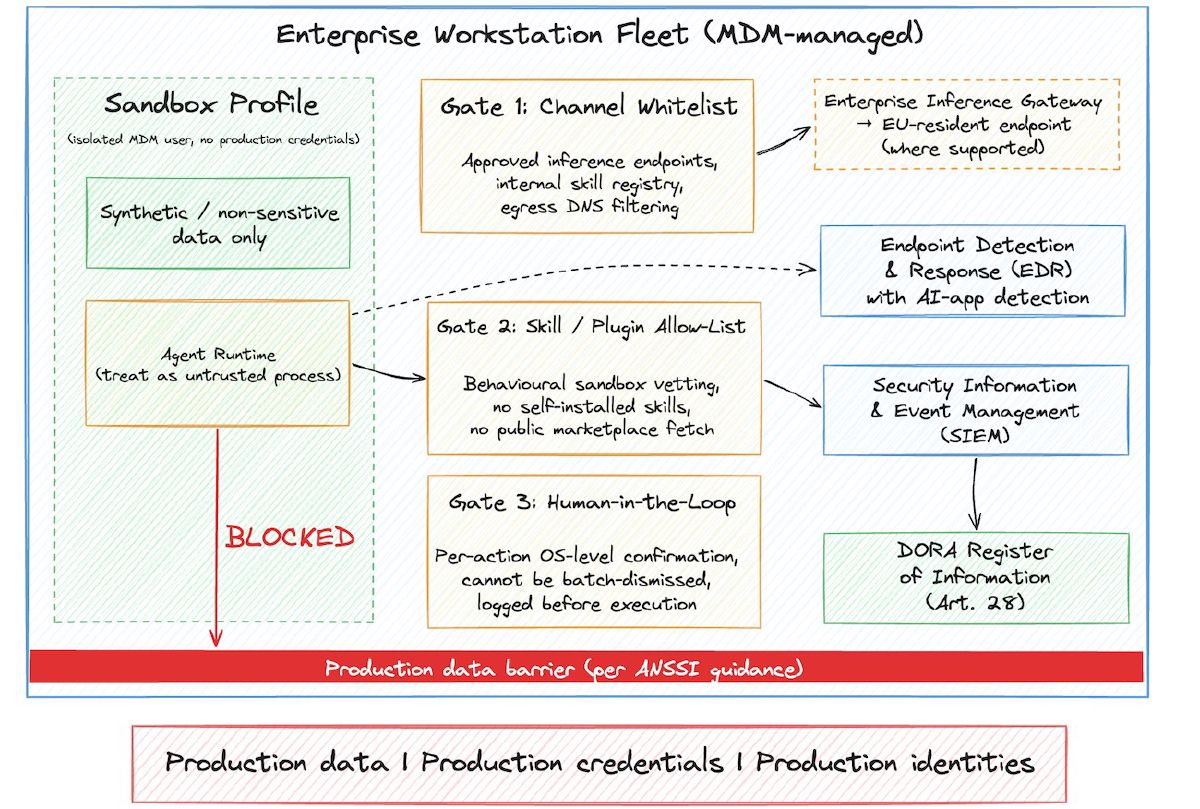

The honest answer to “what should we do” is a four-part control pattern that follows ANSSI’s guidance, hardened against the specific incidents above. None of it is novel: it is the boring operator playbook applied to a class of software that vendors have not yet adapted to.

Sandbox-first. The agent runs under a separate MDM-managed (Mobile Device Management) user profile, with no access to production credentials, file shares, or internal directories beyond what the specific use case requires. Treat that environment like a contractor laptop, not an employee laptop.

Channel whitelisting. The agent calls out only to enterprise-approved destinations. Inference goes through a managed gateway, ideally one that supports EU residency where the provider offers it. For Claude, that means AWS Bedrock or Google Vertex AI EU profiles, but only for Claude Code CLI: Cowork routes through Anthropic’s US infrastructure, with no EU-region option as of April 2026. Plugin installation goes through an internal registry, not the public marketplace. DNS egress is filtered.
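In practice the allow-list is enforced at the proxy or DNS resolver, outside the agent process, so a compromised agent cannot edit it. The decision logic itself is small; a sketch with hypothetical internal hostnames:

```python
from urllib.parse import urlsplit

# Hypothetical enterprise-approved destinations: a managed inference gateway
# and an internal skill registry. Exact hostnames only, no wildcard suffixes.
APPROVED_HOSTS = {"llm-gateway.internal.example", "skills.internal.example"}


def egress_allowed(url):
    """Allow an outbound request only over HTTPS to an exactly matching approved hostname."""
    parts = urlsplit(url)
    if parts.scheme != "https" or parts.hostname is None:
        return False
    return parts.hostname in APPROVED_HOSTS
```

Exact matching is deliberate: suffix matching on a parent domain would let any host under that domain, including a compromised one, receive agent traffic.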

Human-in-the-loop gates for side effects. Any action with a side effect (sending mail, modifying a file outside the sandbox, executing a shell command, calling an external API) needs explicit per-action human confirmation. Not the agent’s built-in “ask before deleting” prompt. That one covers a narrow set of actions and is bypassable by skills that escalate scope. The gate must sit outside the agent runtime, where a malicious skill cannot disable it. This is the OWASP “Excessive Agency” mitigation, applied with teeth.
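The two properties that matter are where the gate runs and what an approval costs. A minimal sketch of an approval broker meant to run as a separate process outside the agent runtime; the class, the action kinds, and the out-of-band display channel are all assumptions. Each side-effecting request gets its own single-use challenge code, so the human approves one action at a time and cannot batch-dismiss a queue.

```python
import secrets
from dataclasses import dataclass

# Hypothetical action kinds that count as side effects and therefore need a human.
SIDE_EFFECT_KINDS = {"send_mail", "write_outside_sandbox", "exec_shell", "call_external_api"}


@dataclass
class PendingAction:
    kind: str
    description: str
    challenge: str  # single-use code shown only to the human


class HumanGate:
    """Approval broker meant to run outside the agent runtime (assumption)."""

    def __init__(self):
        self._pending = {}

    def request(self, kind, description):
        """Called by the agent. Returns an action id, or None if no gate is needed."""
        if kind not in SIDE_EFFECT_KINDS:
            return None
        action_id = secrets.token_hex(8)
        self._pending[action_id] = PendingAction(kind, description, secrets.token_hex(3))
        return action_id

    def prompt_for_human(self, action_id):
        """Text for a display channel the agent cannot read (assumption), e.g. a separate device."""
        a = self._pending[action_id]
        return f"[{a.kind}] {a.description}. Type {a.challenge} to approve."

    def approve(self, action_id, typed_code):
        """Approve exactly one action. The request is consumed whether or not the code
        matches, so a wrong code forces the agent to ask again."""
        action = self._pending.pop(action_id, None)
        if action is None:
            return False  # unknown or already-used id
        return secrets.compare_digest(action.challenge, typed_code)
```

The challenge only works as friction if the agent cannot see it; shown inside the agent’s own UI, the agent could read and submit it, and the gate would be theatre again.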

Skill and MCP server allow-listing as procurement. Treat every skill, every MCP (Model Context Protocol) server, and every plugin as a software supplier through procurement. Source vetting before installation. Behavioural sandboxing (Cisco’s DefenseClaw, open-sourced at RSAC 2026, or Oathe.ai’s scanner) before promotion. Uninstall must include a config-file scrub, because the behavioural payload survives the uninstall.
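Two checks from that paragraph are mechanical enough to sketch: install only skills whose exact bytes were vetted and promoted, and compare agent configuration files against a pre-install baseline after uninstall. A minimal sketch using SHA-256 digests; SOUL.md comes from the Oathe.ai finding above, while the other file name and the promotion workflow are hypothetical. It detects persistence; it does not replace behavioural sandboxing.

```python
import hashlib
from pathlib import Path


def digest(data):
    """SHA-256 hex digest of raw bytes."""
    return hashlib.sha256(data).hexdigest()


def skill_approved(archive_bytes, approved_digests):
    """Install only a skill whose exact bytes were vetted and promoted by procurement."""
    return digest(archive_bytes) in approved_digests


def snapshot(paths):
    """Record digests of the listed agent config files (e.g. SOUL.md) that currently exist."""
    return {str(p): digest(Path(p).read_bytes()) for p in paths if Path(p).is_file()}


def drifted(before, after):
    """Listed config files that are new or changed since the baseline: targets for the scrub."""
    return sorted(path for path, d in after.items() if before.get(path) != d)
```

Take one snapshot before installing a skill and another after uninstalling it; anything `drifted` returns gets restored from the baseline. The comparison only covers the paths you list, so the list should include every file the agent reads as instructions.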

One failure mode worth naming. When the human-in-the-loop gate is inside the agent runtime as a “confirm before action” prompt, and an injected payload tells the agent to issue a batch of confirmations, users tend to confirm. The gate must produce friction the user cannot batch-dismiss. Otherwise it is theatre.

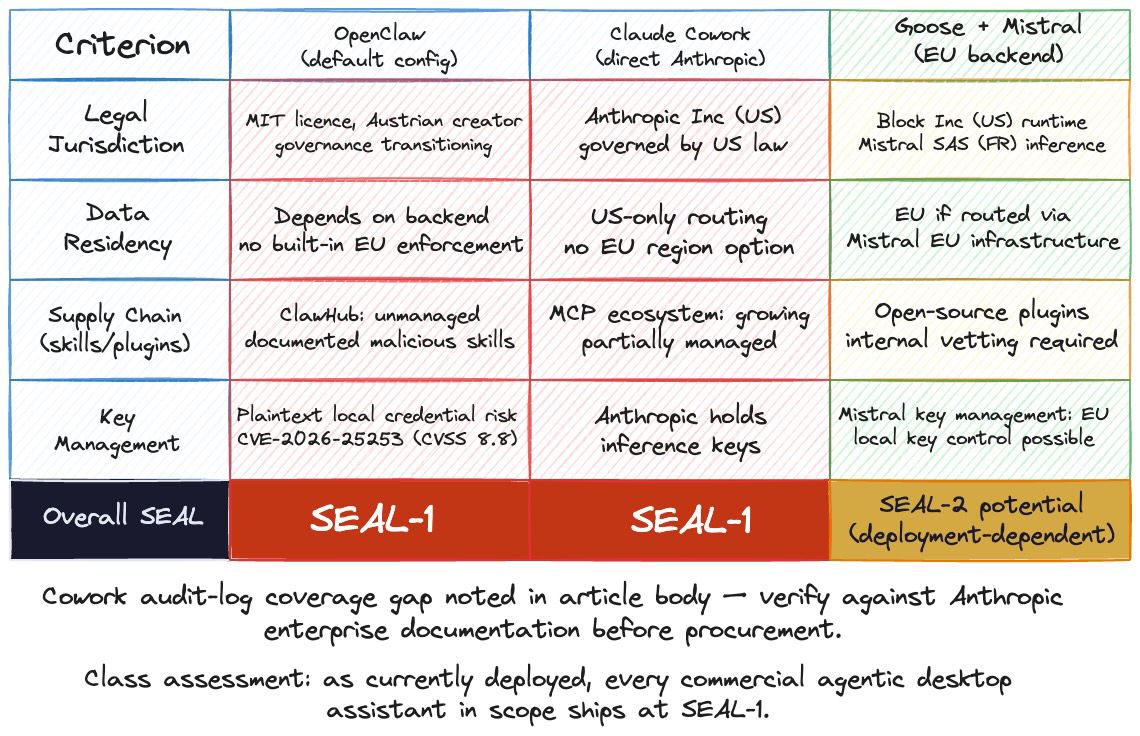

SEAL-Level Mapping for Agentic Desktop Assistants

The SEAL framework, the four-level Sovereignty Evaluation for AI/Cloud Layers used across this blog, is a useful filter when the conversation moves from “is this secure” to “is this sovereign.” For desktop agentic assistants, the answer today is uncomfortable: every commercial product ships at SEAL-1, and the gap to SEAL-2 is structural, not incremental.

OpenClaw: MIT-licensed, Austrian creator, trust model is “one trusted operator boundary per gateway.” Local runtime helps residency, but inference flows to whichever provider the user picks, usually US-based. Skill marketplace unmanaged. Plaintext credential risk. No EU certification found. Overall: SEAL-1.

Claude Cowork: a research preview from Anthropic, Inc. (US), macOS-only, on the Claude Max subscription at USD 100/month (5x usage) or USD 200/month (20x usage), billed in USD with no EUR-denominated tier on Anthropic’s public pricing page. Inference routes through Anthropic’s US infrastructure with no EU-region option, as documented in GitHub issue #40526. For content flagged under Anthropic’s usage policies, retention runs up to 2 years for inputs and outputs and up to 7 years for trust-and-safety classification scores. Anthropic’s own help-centre documentation states that “Cowork activity isn’t currently captured in audit logs, the Compliance API, or data exports, and OpenTelemetry events don’t fill that gap for compliance purposes”; its stated guidance is “if your organization requires formal audit trails, don’t use Cowork for regulated workloads.” That is the single strongest argument against Cowork in any DORA- or NIS2-scope environment: you cannot evidence a control you cannot log, and the vendor is on record saying so. Overall: SEAL-1. Claude Code CLI via Bedrock or Vertex AI EU profiles reaches SEAL-2 on data residency, but that is a different product.

Goose + Mistral on EU infrastructure is the closest sovereignty-improving alternative. Goose is open-source (Apache 2.0, by Block; contributed to the Linux Foundation’s Agentic AI Foundation in late 2025). With Mistral on EU infrastructure, residency, key management, and operational control all improve. The runtime is still US-owned, so jurisdiction stays mixed. Plugins are open-source but need internal vetting. SEAL-2 potential, deployment-dependent, not consumer-grade yet.

There is no EU-sovereign desktop agentic assistant at parity with OpenClaw or Cowork in April 2026. Mistral is shipping agentic capability through le Chat and the API but no packaged desktop client. H Company (Paris) launched its HoloTab Chrome extension on 15 April 2026 and exposes its Holo3 computer-use model via API. Promising, browser-only for now, not a full desktop agent. LightOn and Kyutai are doing serious work in adjacent spaces. The choice today is wait, sandbox, or build.

What a Dutch Lender Would Actually Do

A mid-sized Dutch lending platform, NIS2-essential and DORA-scope, has loan officers asking for an AI assistant to triage email and pre-fill credit notes. The CTO has been forwarded the ANSSI bulletin by a board member who reads Le Monde.

Option A: do nothing and wait. Defensible under DORA’s residual-risk acceptance if documented properly. The risk-register entry cites ANSSI explicitly. The management body formally accepts the deferral. Probably the right answer for the next two quarters.

Option B: deploy Cowork in a sandbox profile on a separate laptop, no access to the loan-management system, no email, no production credentials. Loan officers draft against synthetic data and public regulations. The audit-log gap is contained because there is no production data to audit. Literal ANSSI guidance, useful as a learning environment.

Option C: invest in an internal Goose + Mistral deployment with a vetted skill set on EU infrastructure, behind the four-control pattern. SEAL-2 potential, takes a security engineer and a platform engineer for a quarter, no consumer-grade UX.

I would pick A for the next two quarters and start scoping C in parallel. Option B is fine but tends to drift into shadow-production by accident, and the audit-log gap means a drift incident is invisible until it shows up in the press.

What to Do This Week

A short procurement filter for any agentic AI assistant landing on a desk this quarter. Keep it on a card.

- Beta status (in any meaningful sense): hard stop. ANSSI has named this class unfit for production while in beta.

- No EU data residency option for the inference traffic: SEAL-1, document it in the DORA register, escalate for residual-risk acceptance.

- No audit log coverage: no deployment in any environment supporting a critical or important function under DORA.

- Skill or plugin marketplace executes third-party code with host privileges and lacks behavioural vetting: procurement-grade diligence on every install.

- Vendor cannot describe credential handling at the level of “where, encrypted how, rotated when”: stop the conversation.
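The card translates directly into a reviewable rule set. A minimal sketch with hypothetical field names; the answers come from vendor questionnaires and your own testing, and passing every check means only that the product may continue to a sandboxed review, not that it is cleared for production.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssistantProfile:
    """Procurement answers for one product; field names are hypothetical."""
    in_beta: bool
    eu_inference_residency: bool
    audit_log_coverage: bool
    runs_third_party_code_with_host_privileges: bool
    behavioural_vetting_of_plugins: bool
    credential_handling_documented: bool  # where stored, encrypted how, rotated when


def procurement_filter(p, supports_critical_function):
    """Apply the five checks on the card. Returns (decision, reasons)."""
    stops, escalations = [], []
    if p.in_beta:
        stops.append("beta status: ANSSI has named this class unfit for production while in beta")
    if not p.eu_inference_residency:
        escalations.append("SEAL-1: record in the DORA register, escalate for residual-risk acceptance")
    if supports_critical_function and not p.audit_log_coverage:
        stops.append("no audit log coverage for a critical or important function under DORA")
    if p.runs_third_party_code_with_host_privileges and not p.behavioural_vetting_of_plugins:
        escalations.append("procurement-grade diligence on every skill or plugin install")
    if not p.credential_handling_documented:
        stops.append("vendor cannot describe credential handling")
    decision = "stop" if stops else ("escalate" if escalations else "continue review")
    return decision, stops + escalations
```

Collecting every reason, rather than returning at the first hard stop, gives the risk register a complete entry in one pass.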

On its face, the bulletin is one national CERT advisory. In context, it is the first time a major EU cybersecurity authority has stated in writing that the architecture of agentic desktop assistants is not production-ready. That will age into precedent. Better to read it as a control map than as a speed bump.

This is a reading of the regulatory implications, not legal advice. Verify with counsel before relying on any specific interpretation, particularly DORA Articles 3(19) and 3(21) applied to a specific procurement and the personal-liability scope of NIS2 Article 20 in your transposed national law.